Definition: Agentic AI security refers to the discipline of identifying, mitigating, and monitoring the unique threats that arise when LLM-based agents operate autonomously: executing multi-step plans, calling external tools, and taking actions with real-world consequences. Unlike traditional application security, agentic AI security must address a semantic attack surface where malicious instructions can be embedded in any data the agent reads.

Why Agentic AI Breaks Traditional Security Models

Agentic AI systems introduce an expanded, semantic attack surface that traditional perimeter and API security were never designed to address. When an LLM can read emails, browse URLs, and execute code autonomously, every untrusted data source becomes a potential injection vector.

By 2028, Gartner predicts 25% of enterprise breaches will trace back to AI agent abuse from both external actors and malicious insiders. That prediction is not a warning about future technology. It describes the systems many teams are shipping today.

Traditional security models assume that threats arrive at defined perimeters: network edges, API endpoints, authenticated sessions. Agentic AI dismantles every one of those assumptions. An agent that browses the web, reads inbound emails, queries vector databases, and writes to downstream APIs has no perimeter. Its attack surface is everywhere it reads.

The shift is subtle but critical. A REST API receives structured input from a known caller. An LLM agent receives natural language from an unpredictable world, then decides what to do with it. The intelligence that makes agents powerful is the same property that makes them exploitable.

“By 2028, Gartner predicts 25% of enterprise breaches will be traced directly to AI agent abuse, both external and internal.”

Mapping the Agentic AI Attack Surface

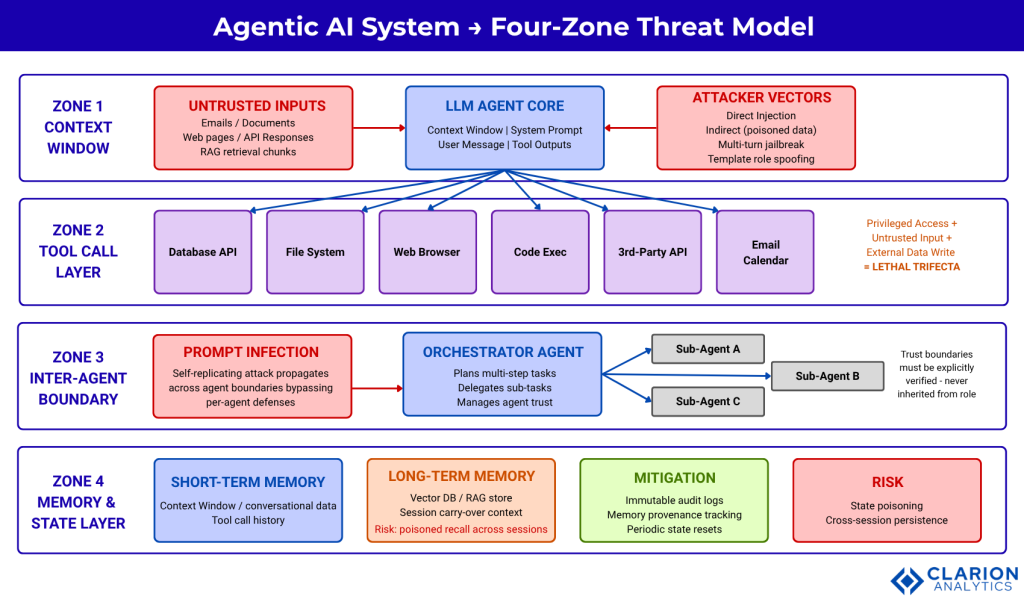

The agentic AI attack surface spans four primary zones: the context window, the tool-call layer, the inter-agent trust boundary, and the memory and state layer. Each zone has distinct threat profiles and requires different defensive controls.

Zone 1 is the context window. Every string that enters the LLM’s context, regardless of source, can contain instructions the model may follow. A document summary, a search result, an email, or an API response can all carry embedded directives. This is indirect prompt injection, and it is the primary vector for production attacks today.

Zone 2 is the tool-call layer. Agents call external tools: databases, file systems, browsers, code interpreters, email clients. Security researchers describe a “lethal trifecta” that makes this zone especially dangerous: the agent has privileged access, it processes untrusted input, and it can write data externally. Any single property creates risk. All three together enable complete system compromise from one injected instruction.

Zone 3 is the inter-agent trust boundary. Multi-agent architectures create new trust propagation paths. Research published on arXiv in 2024 introduced the concept of Prompt Infection: a self-replicating attack where malicious instructions injected into one agent’s context propagate to every agent that agent subsequently communicates with, bypassing per-agent defenses entirely.

Zone 4 is the memory and state layer. Long-running agents often persist context across sessions using vector databases or structured memory stores. Poisoning this layer creates cross-session attacks: an instruction injected in one conversation surfaces as trusted context in a future session, hours or days later.

Figure 1 caption: A four-zone threat model showing the context window, tool-call layer, inter-agent boundary, and memory layer, with annotated attack vectors at each boundary. Red outlines denote attack surfaces; green outlines denote mitigation controls. Arrows indicate data flow direction and trust degradation points.

Prompt Injection in Agentic Systems: Direct, Indirect, and Self-Replicating

Prompt injection occurs when malicious instructions embedded in external data override the agent’s original task. In multi-agent architectures, “Prompt Infection” attacks replicate these instructions across agents, bypassing defenses designed only for single-agent systems.

Direct injection is the simplest form: an attacker who controls a user-facing input crafts a prompt that overrides the system prompt or hijacks task execution. It is well-documented and most mature model providers have added some basic resistance to it.

Indirect injection is far more dangerous in production. Research from arXiv (2024) shows that when agents process external content, any text in that content can carry instructions the model treats as authoritative. A malicious actor who controls a single webpage, email template, or document in a RAG corpus can redirect every agent that reads it.

Automated injection attacks are maturing rapidly. A 2025 paper on reinforcement-learning-based prompt injection (AutoInject) demonstrated attack success rates of up to 78% against frontier models, including 22% against models specifically fine-tuned to resist injection. Template-based multi-turn attacks, where an adversary embeds forged conversation role tags in tool outputs, further raise success rates by exploiting how instruction-tuned models segment dialogue turns.

“In multi-agent systems, a single injected instruction can self-replicate across agents, turning one compromised tool call into full infrastructure access.”

Code Snippet 1: Prompt Injection Input Scanning with LLM Guard

Source: protectai/llm-guard, input_scanners/prompt_injection.py

Three lines of integration add a DeBERTa-based injection classifier between any user input and your LLM call. The scanner returns a sanitized prompt, a validity flag, and a numeric risk score. Set threshold=0.5 as a starting point and adjust based on your false-positive tolerance. This belongs in your API gateway or agent entry point, before the input reaches the model context window.

The Lethal Trifecta: Why Agent Privileges Amplify Every Threat

Security researchers identify a “lethal trifecta” in agentic AI: privileged access to sensitive systems, processing of untrusted external input, and the ability to write or share data externally. Any one of these properties creates risk. All three together enable complete system compromise from a single injected instruction.

A January 2026 analysis from Aembit points to a critical identity gap: only 10% of organizations have a well-developed strategy for managing non-human and agentic identities, despite credential abuse being the most common initial access vector in breaches according to the 2025 Verizon DBIR.

In practice, teams building agentic systems typically find that identity scoping falls through the cracks. The agent is given broad credentials because it was easier to set up that way during development. Those credentials move to production unchanged. An injected instruction then exploits them to exfiltrate data, delete records, or call external services the business never intended the agent to reach.

“Treat every LLM output as potentially adversarial. The agent that read an untrusted email five steps ago may now be about to write to your database.”

Comparison: Defensive Approaches for Production Agentic AI

| Approach / Tool | Key Strength | Main Limitation | Best Used When |

|---|---|---|---|

| Input Scanning (LLM Guard) | Detects known injection patterns at the input boundary; sub-millisecond latency with ONNX | Signature-based; novel zero-day injections may evade detection | You need a drop-in layer before any user input reaches the model |

| Dual-LLM / CaMeL Pattern | Structural defense: quarantined LLM cannot influence privileged execution paths | Higher latency and cost; requires architectural redesign | Building net-new agent pipelines where security must be enforced by design |

| Action-Scope Constraints | Prevents agents from executing arbitrary tasks outside a defined workflow envelope | Reduces agent flexibility; requires careful workflow modelling upfront | Agents deployed in narrow, well-defined domains (customer support, code review) |

| Continuous Red-Team (garak) | Systematic, automated vulnerability scanning across 100+ injection probe types in CI/CD | Detects known weaknesses; does not block live attacks | Pre-deployment gate and post-deployment regression testing on every model update |

| Least-Privilege Tool Scoping | Limits blast radius: a compromised agent cannot access resources outside its defined scope | Requires ongoing access governance; permissions drift over time | All production agentic systems, always; this is the minimum viable safeguard |

Production Hardening: A Layered Defense Playbook

Production hardening for agentic AI requires at minimum four layers: input scanning at the context boundary, least-privilege tool scoping, output validation before any downstream action, and continuous red-team scanning integrated into the CI/CD pipeline.

Layer one is input scanning. Deploy a classifier between every user input and tool output before it enters the agent’s context window. LLM Guard’s PromptInjection scanner handles this with sub-millisecond latency using ONNX inference. Pair it with an InvisibleText scanner that strips Unicode private-use-area characters, a common steganographic injection vector.

Layer two is least-privilege tool scoping. Every tool the agent calls should have a scope definition enforced at the infrastructure layer, not just in the system prompt. The tldrsec/prompt-injection-defenses catalog recommends rewriting SQL queries generated by the LLM into semantically equivalent queries that only operate on data the agent is authorized to access. Do not trust the model to self-enforce access control.

Layer three is output validation. Every response the agent generates before it takes a downstream action should pass through an output scanner. Validate that the action type matches the task context, that no PII is being written to unintended destinations, and that the response does not contain code patterns that would execute in the downstream system.

Code Snippet 2: Continuous Vulnerability Scanning with NVIDIA garak

Source: NVIDIA/garak (7.7k stars, actively maintained)

Run this as a pre-deployment gate in your CI/CD pipeline. garak sends hundreds of structured probe prompts from known injection categories and reports pass/fail rates per vector. A high failure rate on the promptinject probe before you ship is far cheaper than a breach after. Integrate with your existing test framework via the JSONL output.

Architectural Patterns That Reduce Injection Risk by Design

The most reliable structural defenses are the Dual-LLM pattern, which separates a privileged planning LLM from a quarantined execution LLM, and strict action-scope constraints that prevent agents from solving tasks outside a predefined workflow envelope.

The CaMeL defense (Debenedetti et al., 2025) applies principles from traditional software security, specifically Control Flow Integrity and Information Flow Control, to LLM agents. The Privileged LLM plans which actions to take. The Quarantined LLM executes those actions against untrusted data. A malicious instruction in external content can corrupt the quarantined context but cannot reach the privileged planning path.

A complementary approach described in Design Patterns for Securing LLM Agents (2025) constrains agent action scope so that the agent is structurally incapable of executing tasks outside its defined workflow. An agent that cannot send arbitrary emails cannot be injected into sending one. Accepting that constraint, where the use case allows, eliminates an entire class of attack.

Gartner’s April 2026 analysis recommends that software engineering leaders reinforce agent security with authentication and authorization practices tailored specifically for AI agents, not inherited from human user roles.

“Security for agentic AI is not a model problem. It is a systems design problem, and the solution requires layers.”

Frequently Asked Questions

What is prompt injection in agentic AI and why is it dangerous?

Prompt injection is an attack where malicious text embedded in external data, such as documents, emails, or web pages, overrides an LLM agent’s original instructions. It is dangerous in agentic systems because agents act autonomously, often with privileged access to databases and APIs. A successful injection can cause the agent to exfiltrate data, delete records, or call external services without triggering security alerts.

How is the attack surface for agentic AI different from traditional APIs?

Traditional API security defends defined perimeters against structured, typed inputs. Agentic AI systems read natural language from arbitrary external sources and make autonomous decisions based on that content. Every external data source the agent reads, every tool it calls, and every sub-agent it coordinates with is a potential attack surface. There is no perimeter; the agent’s intelligence is both its capability and its vulnerability.

What tools can I use to detect and block prompt injection in production?

For real-time detection, deploy LLM Guard’s PromptInjection scanner as an input gateway before any external content reaches the model context. For continuous pre-deployment scanning, run NVIDIA’s garak against your agent endpoint with the promptinject and encoding probe suites as a CI/CD gate. For a curated reference of every proposed defense, consult the tldrsec/prompt-injection-defenses GitHub repository.

Is there an architectural way to prevent prompt injection rather than just detect it?

Yes. The Dual-LLM pattern (CaMeL) structurally separates a privileged planning LLM from a quarantined execution LLM. Injected instructions in external data can corrupt the quarantined context but cannot reach the privileged planning path. Combined with strict action-scope constraints that limit what the agent can do by design, these patterns reduce injection risk without relying entirely on detection-based defenses.

How do I red-team an agentic AI system before it goes live?

Install NVIDIA garak and run it against your agent endpoint as a pre-deployment gate. Use the promptinject, encoding, and dan probe suites as a minimum. Integrate the JSONL output into your CI/CD pipeline and block deployment on critical failures. For multi-agent systems, also test trust boundary propagation manually: inject a payload into one sub-agent and verify it does not propagate to the orchestrator’s privileged context.

The Three Things Every Team Building Agents Must Get Right

The most important investments in agentic AI security are: mapping your attack surface across all four threat zones before the first line of production code, enforcing least-privilege tool scoping at the infrastructure level rather than trusting model self-restraint, and running continuous automated red-teaming as a non-negotiable CI/CD gate.

Every team that ships agents at speed discovers the same gaps after the fact: credentials that were too broad, external data sources treated as trusted, and no systematic process to verify the agent behaves safely when adversarial content arrives. These are not model problems. They are engineering and governance problems.

The good news is that the tooling exists today. LLM Guard, garak, and the architectural patterns described in current research all address real production risks with code you can deploy this week. The question is not whether to invest in agentic AI security. It is whether you invest before or after the incident.

Are you treating the agent’s context window as the boundary it actually is, or still defending the perimeter that no longer exists?