What is object detection in safety-critical environments? Object detection in safety-critical environments means identifying and localizing objects in real time under conditions where a missed detection or false positive can cause physical harm or system failure. The pipeline must meet hard latency budgets, deliver statistically bounded error rates, and operate deterministically on edge hardware. Architectures currently competing for this role include CNN-based detectors (the YOLO family) and end-to-end transformer-based detectors (RT-DETR, DEIM, RF-DETR).

Why the Architecture Decision Matters More Than the Benchmark Score

In safety-critical systems, the wrong detector does not just underperform. It creates legally and physically unacceptable failure modes. Latency spikes, non-deterministic post-processing, and missed small objects are architecture properties, not tuning problems.

The global computer vision market was valued at USD 19.78 billion in 2024 and is projected to reach USD 112.10 billion by 2035 at a 17.3% CAGR, driven by autonomous vehicles, medical imaging, and industrial automation. Every one of those verticals has a safety failure mode. An engineer picking a detector in 2025 is not choosing between convenience and performance. They are choosing between architectures with fundamentally different risk profiles.

The two dominant families are the YOLO series (CNN-based, anchor-free since YOLOv8, and NMS-dependent in most releases before YOLO26, with YOLOv10 as an earlier NMS-free exception) and the DETR family (transformer-based, NMS-free, historically slower but real-time capable since RT-DETR’s CVPR 2024 debut). The rest of this post gives you the information to choose between them for your specific safety constraint set.

How YOLO-Family Detectors Work and Where They Break

YOLO models use a single forward pass through a convolutional backbone to predict bounding boxes and class probabilities simultaneously. Their main structural weakness in safety scenarios is Non-Maximum Suppression, a post-processing step that adds variable latency and can suppress legitimate detections in dense scenes.
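
To make the suppression risk concrete, here is a minimal pure-Python sketch of greedy NMS. It is an illustration of the general technique, not the implementation used by any particular YOLO release; the function names, box coordinates, and the 0.5 IoU threshold are assumptions chosen for the example.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes: List[Box], scores: List[float], iou_threshold: float = 0.5) -> List[int]:
    """Return indices of the boxes kept by greedy NMS, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with any box already kept.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in kept):
            kept.append(i)
    return kept


# Two distinct pedestrians standing close together (illustrative coordinates).
pedestrians = [(100.0, 50.0, 140.0, 150.0), (110.0, 50.0, 150.0, 150.0)]
confidences = [0.91, 0.88]
kept = greedy_nms(pedestrians, confidences, iou_threshold=0.5)
```

With these example boxes the IoU is 0.6, so the second pedestrian is dropped even though its confidence is 0.88. The work inside the loop also grows with the number of candidate boxes, which is where the variable latency comes from.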

The YOLO family has evolved rapidly. YOLO11 introduced C3k2 blocks and C2PSA spatial attention while retaining NMS decoding. YOLOv12 (NeurIPS 2025) layered efficient area attention and R-ELAN blocks on top, with YOLOv12-N achieving 40.6% mAP at 1.64 ms on a T4 GPU. YOLO26, Ultralytics’ most recent release as of early 2026, removed NMS entirely with a native end-to-end architecture and is reported to run up to 43% faster on CPU than prior generations. The trajectory is clear: YOLO is moving toward the NMS-free end-to-end design that transformers pioneered.

“In safety systems, NMS is not a performance detail. It is a latency liability and a correctness risk under occlusion.”

For teams with hard real-time constraints on power-limited edge hardware (Jetson Orin, Hailo, Coral), YOLO11 and YOLO26 remain the dominant choice. The toolchain is mature, the TensorRT and ONNX export paths are battle-tested, and the ultralytics/ultralytics repository (55k+ GitHub stars, continuously maintained through 2026) provides a single interface for training, validation, and multi-format export.

How Transformer-Based Detectors Work and Why They Now Matter

RT-DETR removes the NMS step. It is trained with bipartite (Hungarian) matching, which assigns each ground-truth object to a single decoder query, so at inference the decoder emits a fixed set of predictions that need only a confidence threshold. This makes end-to-end latency more predictable and avoids the suppression errors that NMS occasionally introduces in crowded frames.

The RT-DETR paper (CVPR 2024) from Baidu’s PaddlePaddle team marked the first transformer detector to operate under true real-time constraints. Its hybrid encoder decouples intra-scale and cross-scale feature interaction, making it substantially faster than prior DETR variants. RT-DETR-R50 achieves 53.1% AP and 108 FPS on a T4 GPU, competitive with YOLO on speed while outperforming it on accuracy at equivalent model sizes. RT-DETRv3 (December 2024) pushed the R101 backbone variant to 54.6% AP, surpassing YOLOv10-X on COCO while maintaining the same latency profile.

The safety-specific advantage of the NMS-free architecture is not just speed. NMS can merge detections from overlapping objects or suppress legitimate hits when confidence scores are close. In a pedestrian detection scenario at 40 meters, that suppression is catastrophic. The RT-DETR decoder sidesteps this problem structurally.

Here is what NMS-free inference looks like in practice using the Ultralytics integration:
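
A minimal sketch of that call, assuming the `ultralytics` package is installed and using its `RTDETR` class. The checkpoint name `rtdetr-l.pt`, the 0.5 confidence threshold, and the helper names `to_records` and `detect` are illustrative choices, not part of the library.

```python
from typing import Dict, List


def to_records(xyxy: List[List[float]], conf: List[float], cls: List[float], names: Dict[int, str]) -> List[Dict]:
    """Convert parallel lists of boxes, scores, and class ids into plain dict records."""
    return [
        {"box": box, "score": score, "label": names[int(c)]}
        for box, score, c in zip(xyxy, conf, cls)
    ]


def detect(image_path: str, weights: str = "rtdetr-l.pt", conf: float = 0.5) -> List[Dict]:
    """Run RT-DETR on one image and return its detections; no NMS call is involved."""
    from ultralytics import RTDETR  # imported lazily because it is a heavy dependency

    model = RTDETR(weights)
    result = model.predict(image_path, conf=conf)[0]  # one Results object per input image
    boxes = result.boxes
    return to_records(boxes.xyxy.tolist(), boxes.conf.tolist(), boxes.cls.tolist(), result.names)


# Example usage (hypothetical image path):
# detections = detect("frame_000123.jpg")
```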

Source: ultralytics/ultralytics

This code runs the full detection pipeline without a separate NMS call. The decoder outputs a fixed-size set of object predictions, filtered by confidence threshold, with no iterative post-processing loop. For safety engineers, this means one fewer source of variable latency in the end-to-end pipeline.
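
The filtering step itself can be sketched in plain Python. This is a simplified illustration of threshold-only post-processing over a fixed-size query set, not RT-DETR's actual code; the query dictionaries and the `filter_queries` name are hypothetical.

```python
from typing import Dict, List


def filter_queries(queries: List[Dict], threshold: float = 0.5) -> List[Dict]:
    """Keep every query whose score clears the threshold; queries are never compared to each other."""
    return [q for q in queries if q["score"] >= threshold]


# Hypothetical decoder output: a fixed number of queries, most of them scoring as "no object".
queries = [
    {"box": (100, 50, 140, 150), "label": "person", "score": 0.91},
    {"box": (110, 50, 150, 150), "label": "person", "score": 0.88},
    {"box": (0, 0, 10, 10), "label": "person", "score": 0.03},
    {"box": (300, 80, 360, 200), "label": "person", "score": 0.07},
]
detections = filter_queries(queries)
```

Both overlapping pedestrians survive because no box is ever compared against another, and the cost is one pass over a query set whose size does not depend on scene density.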

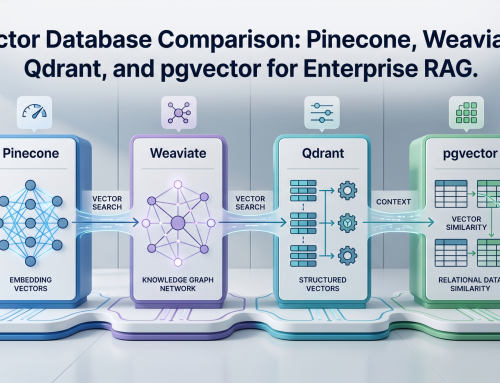

Benchmark Reality Check: Speed, Accuracy, and Edge Constraints

The comparison between architectures is not one-dimensional. Model scale, hardware target, and acceptable precision-recall tradeoff all determine the right choice for a given safety specification.

“A 3% AP gap that looks small on a leaderboard can mean the difference between detecting a pedestrian at 40 meters and missing them entirely.”

| Model / Architecture | COCO AP (val2017) | Inference Speed (T4 GPU) | NMS Required | Best Safety Use Case |
|---|---|---|---|---|
| YOLO11-N (Ultralytics) | ~39% AP | ~1.8 ms | Yes | Ultra-low-latency edge, constrained hardware |
| YOLO26-N (Ultralytics) | ~40%+ AP | ~1.5 ms (43% faster CPU) | No (end-to-end) | Edge deployment with deterministic latency |
| YOLOv12-S (attention) | 48.0% AP | 2.61 ms | Yes | Mid-range edge, attention-rich features needed |
| RT-DETR-R50 (CVPR 2024) | 53.1% AP | 9.3 ms | No | High-accuracy, moderate-latency systems |
| RT-DETRv3-R101 | 54.6% AP | ~13 ms | No | Medical imaging, high-recall safety systems |
| RT-DETRv4-L (distilled) | 55.4% AP | ~15 ms | No | Precision-critical infrastructure inspection |

Speed metrics reflect T4 GPU. CPU latency will differ significantly. Always benchmark on your target hardware before committing to an architecture.
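
One way to run that benchmark is a small timing harness that reports tail latency rather than the mean. The sketch below uses only the Python standard library; the function names, warmup count, and run count are assumptions, and the stand-in workload should be replaced by a call into your exported model that blocks until the result is ready.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency_ms(infer: Callable[[], object], warmup: int = 20, runs: int = 200) -> Dict[str, float]:
    """Time repeated calls to `infer` and summarize the latency distribution in milliseconds."""
    for _ in range(warmup):
        infer()  # untimed calls so caches and clocks settle before measurement
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append((time.perf_counter() - start) * 1000.0)
    # 99 cut points: index 49 is the median, index 98 is the 99th percentile.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {"p50": cuts[49], "p99": cuts[98], "max": max(samples)}


def within_budget(stats: Dict[str, float], budget_ms: float) -> bool:
    """A model passes only if its 99th-percentile latency fits the cycle budget."""
    return stats["p99"] <= budget_ms


# Stand-in workload for illustration only.
stats = measure_latency_ms(lambda: sum(range(1000)), warmup=5, runs=100)
```

For a 30 FPS pipeline the budget passed to `within_budget` would be about 33.3 ms for the whole frame, of which the detector gets only a share.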

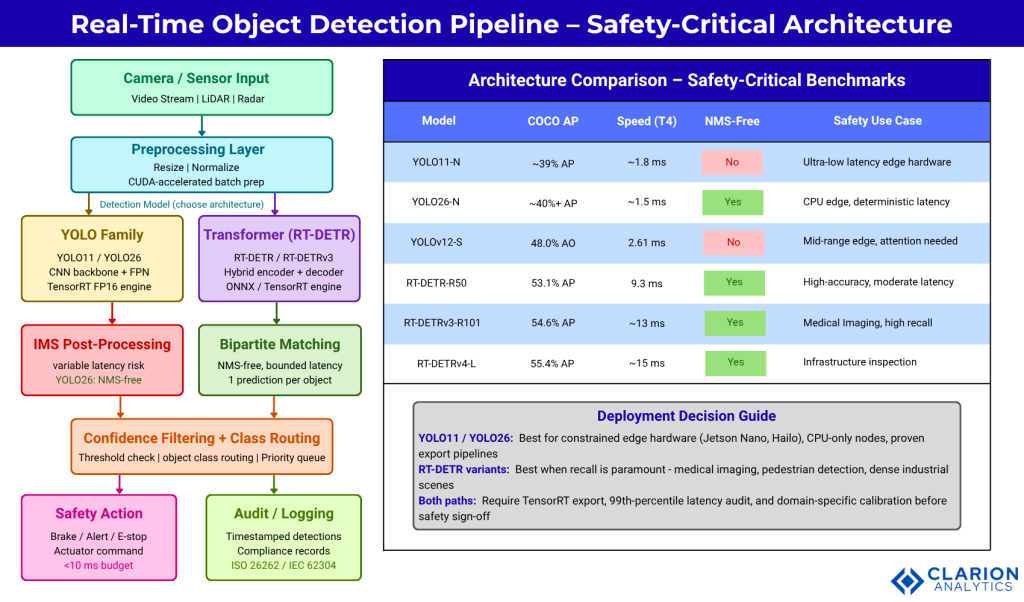

System Architecture for Safety-Critical Object Detection

A production safety detection pipeline has more moving parts than a single model choice. The architecture diagram shows the full pipeline from sensor to safety action, with the critical decision fork at the post-processing layer.

The pipeline flows from camera or sensor input through a CUDA-accelerated preprocessing layer to the detection model. At the post-processing stage, YOLO pipelines pass through NMS (variable latency, suppression risk) while RT-DETR pipelines apply only a confidence threshold to the decoder’s fixed-size query set (bounded latency, no pairwise suppression). Both paths merge at a confidence filtering and class routing layer before branching to safety actions and a parallel audit logging stream.
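
The confidence filtering and class routing layer might look like the following sketch. The class labels, per-class thresholds, and action names in `ROUTES` are hypothetical; a real system would derive them from its hazard analysis.

```python
from typing import Dict, List

# Hypothetical mapping from class label to (minimum confidence, safety action).
ROUTES = {
    "person": (0.30, "emergency_stop"),
    "forklift": (0.50, "slow_down"),
}


def route(detections: List[Dict]) -> List[str]:
    """Apply per-class confidence thresholds and return the safety actions to trigger."""
    actions: List[str] = []
    for det in detections:
        rule = ROUTES.get(det["label"])
        if rule is None:
            continue  # classes without a safety rule trigger nothing here
        min_score, action = rule
        if det["score"] >= min_score and action not in actions:
            actions.append(action)
    return actions
```

Using a lower threshold for `person` than for `forklift` reflects the idea that a missed person costs more than a false stop; that trade-off is an assumption of this example.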

The audit logging branch is not optional in safety-certified systems. ISO 26262 (automotive) and IEC 62304 (medical device software) both put heavy weight on traceability and documented evidence, which in practice means keeping timestamped records of system decisions. Your detector’s output format must be auditable from the point of model inference, not just the final actuator command.
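
A minimal sketch of such a record, written as one JSON line per inference decision. The field names, the version tag, and the action name are assumptions made for illustration; neither standard prescribes this schema.

```python
import json
import time
from typing import Dict, List


def audit_record(frame_id: int, model_version: str, detections: List[Dict], action: str) -> str:
    """Serialize one inference decision as a single JSON line for an append-only log."""
    record = {
        "timestamp_ns": time.time_ns(),  # wall-clock time at which the record is created
        "frame_id": frame_id,
        "model_version": model_version,
        "detections": detections,  # raw model output, captured before any actuator logic
        "action": action,
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    frame_id=1042,
    model_version="detector-v1",  # hypothetical version tag
    detections=[{"label": "person", "score": 0.91, "box": [100, 50, 140, 150]}],
    action="brake_request",  # hypothetical safety action name
)
```

Logging the raw detections alongside the chosen action lets a reviewer reconstruct why the system acted, not only that it did.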

Real-World Safety Use Cases: Choosing the Right Architecture

Autonomous vehicles with tight cycle-time budgets favor YOLO11 and YOLO26 on TensorRT. Medical imaging systems requiring high recall favor RT-DETR variants. Industrial inspection with dense small-object scenes benefits from RT-DETRv3’s hierarchical positive supervision.

In practice, teams building ADAS pipelines typically find that YOLO11 or YOLO26 exported to TensorRT FP16 on a Jetson Orin gives them the 30+ FPS floor they need. Fine-tuning on domain-specific datasets (rain, night, partial occlusion) then recovers much of the accuracy gap to transformer models that shows up on generic benchmarks.

For medical imaging, the calculus reverses. NVIDIA and GE HealthCare’s 2025 collaboration on autonomous diagnostic imaging used high-recall transformer-grade pipelines because false negatives carry catastrophic clinical cost. A missed tumor on a scan is not a recoverable error. Here, the 1-2% AP advantage of RT-DETR variants over YOLO at comparable inference speeds justifies the additional compute.

Deloitte’s 2026 State of AI in the Enterprise report notes that AI adoption is most advanced in manufacturing, logistics, and defense, precisely the sectors where detection pipelines run on edge hardware with strict power and latency envelopes. Only 14% of organizations surveyed had production-ready autonomous AI solutions, underscoring that the production deployment gap is the engineering challenge of the next three years, not model selection alone.

“The model that wins on COCO may not be the model that keeps the production line safe. Domain-specific fine-tuning and latency auditing are non-negotiable.”

Implementation Path: From Model to Safety-Certified Inference

Safety-critical deployment requires TensorRT FP16 export for deterministic latency, INT8 calibration for power-constrained edge nodes, a latency budget audit at the 99th percentile, and documented evaluation against domain-specific failure modes.

Here is the YOLO11 export workflow that bridges PyTorch training to a deterministic edge engine:
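
A minimal sketch of the two steps, assuming the `ultralytics` package and a CUDA-capable machine with TensorRT available. The checkpoint name `yolo11n.pt` and the wrapper names `export_to_tensorrt` and `load_engine` are illustrative; build the engine on the same GPU type you deploy to.

```python
# Export arguments: format="engine" selects TensorRT, half=True requests FP16 precision.
EXPORT_ARGS = {"format": "engine", "half": True}


def export_to_tensorrt(weights: str = "yolo11n.pt", device: int = 0) -> str:
    """Step 1: build a TensorRT FP16 engine from PyTorch weights and return the engine path."""
    from ultralytics import YOLO  # imported lazily because it is a heavy dependency

    model = YOLO(weights)
    return model.export(device=device, **EXPORT_ARGS)


def load_engine(engine_path: str):
    """Step 2: load the serialized engine through the same YOLO interface for inference."""
    from ultralytics import YOLO

    return YOLO(engine_path)


# Example usage (requires a GPU and TensorRT):
# engine_path = export_to_tensorrt("yolo11n.pt")
# results = load_engine(engine_path)("frame_000123.jpg")
```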

Source: ultralytics/ultralytics

This two-step process serializes the model graph into a TensorRT engine optimized for your specific GPU. The half=True flag enables FP16 precision, roughly halving the memory traffic for weights and activations relative to FP32, usually with negligible accuracy loss on detection tasks. The resulting .engine file runs with far more repeatable latency than an eager PyTorch model, which is critical for any safety system that needs to show it will not spike above its cycle time budget. Treat that as a claim to verify by measurement on the target device, not as a property the export guarantees.

“Exporting to TensorRT is not optional for safety. It is the step that converts a research model into an auditable, bounded-latency system.”

For INT8 calibration on power-constrained nodes, collect 500 representative calibration frames from your target domain and pass them to the TensorRT calibration API. Do not use ImageNet or COCO frames. Calibrate on your actual operating environment.
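
Selecting those frames reproducibly can be as simple as the sketch below. The function name, the fixed seed, and the file paths are hypothetical, and the step that hands the sample to the calibrator is left out because it depends on your toolchain.

```python
import random
from typing import List, Sequence


def select_calibration_frames(frame_paths: Sequence[str], count: int = 500, seed: int = 0) -> List[str]:
    """Draw a reproducible random sample of frames captured in the target environment."""
    if len(frame_paths) < count:
        raise ValueError(f"need at least {count} frames, got {len(frame_paths)}")
    rng = random.Random(seed)  # fixed seed so the calibration set can be regenerated later
    return sorted(rng.sample(list(frame_paths), count))


# Hypothetical capture log from the deployment site.
captured = [f"site_capture/frame_{i:06d}.jpg" for i in range(20000)]
calibration_set = select_calibration_frames(captured)
```

Sampling across the whole capture log, instead of taking the first 500 frames, helps the calibration set cover the lighting and weather conditions the system will actually see.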

Frequently Asked Questions

Which is faster on edge hardware, YOLO or RT-DETR? YOLO models are generally faster at equivalent model size on constrained edge hardware. YOLO11-N runs under 2 ms on a T4 GPU, while RT-DETR-R50 runs at about 9 ms. However, YOLO26’s NMS-free architecture closes the post-processing gap, and on NVIDIA Jetson with TensorRT, both can meet 30 FPS requirements at different accuracy levels.

Does removing NMS with RT-DETR actually improve safety outcomes? It can, in dense-object scenarios. NMS can suppress valid detections when two objects have overlapping bounding boxes and similar confidence scores. RT-DETR is trained with bipartite matching so that each object is claimed by a single decoder query, which removes the suppression step altogether. In pedestrian-dense or industrial scenes, this design can reduce false negatives compared to NMS-based pipelines at equivalent confidence thresholds, though the effect should be confirmed on your own data.

Can YOLO models match transformer accuracy for small-object detection? YOLOv12 and YOLO11 with spatial attention modules have closed much of the small-object gap. YOLOv12-S beats RT-DETR-R18 on COCO while running 42% faster. However, for very small or distant objects in safety scenarios (pedestrians beyond 50 meters, defects under 32 pixels), RT-DETRv3’s hierarchical dense positive supervision consistently outperforms YOLO variants on domain-specific benchmarks.

What model format should I use for safety-critical edge deployment? TensorRT engine files (.engine) for NVIDIA hardware and ONNX for cross-platform interoperability. Never deploy raw PyTorch .pt files to a production safety system. The TensorRT path gives you FP16 and INT8 precision options, deterministic graph execution, and measurable latency bounds. The Linaom1214/TensorRT-For-YOLO-Series repository provides tested conversion scripts for all major YOLO variants.

How do I choose between YOLO11, YOLO26, and RT-DETR for my project? Use YOLO11 if you need proven stability and multi-task support (detection, segmentation, pose) on existing Ultralytics pipelines. Use YOLO26 if your deployment target is CPU-only or very low-power edge hardware requiring NMS-free determinism. Choose RT-DETR variants when your safety specification requires the highest achievable recall and you have moderate GPU headroom, particularly in medical imaging or high-density pedestrian detection where missed objects carry the highest risk.

Conclusion: Three Decisions That Define Your Detector Choice

Three insights from this analysis should shape your architecture decision. First, NMS is a correctness risk in dense scenes, not just a speed overhead. Both YOLO26 and RT-DETR now offer NMS-free paths, and safety systems should use one of them. Second, benchmark AP scores are starting points, not deployment verdicts. A model with 2% lower COCO AP but 40% lower 99th-percentile latency on your target hardware may be the safer choice. Third, the production deployment gap is real: only 14% of organizations have production-ready autonomous AI, which means most teams are earlier in this process than they think. Export to TensorRT, calibrate on your domain, and measure latency under load before you finalize any architecture decision.

Which constraint does your safety specification prioritize first: latency, recall, or hardware portability?