What Is Multilingual AI Customer Service?

Multilingual AI customer service refers to the deployment of voice and text artificial intelligence systems capable of understanding, processing, and responding in two or more languages within a single customer experience platform. In an APAC context, this spans automated speech recognition (ASR), natural language processing (NLP), and omnichannel routing across languages including Mandarin, Bahasa Indonesia, Malay, Thai, Tagalog, and Vietnamese, as well as their regional dialects.

Why APAC Is the World’s Hardest Market for CX AI

APAC spans more than 2,300 living languages across 48 countries, making it the most linguistically complex CX market on earth. A single AI model trained on standard English or Mandarin will fail the majority of real-world customer interactions in markets like Indonesia, the Philippines, or Vietnam.

The scale of the challenge is not academic. Grand View Research (2024) found that APAC already accounts for 85% of global retail chatbot spending despite representing only 53% of the global population. The same data confirms that 74% of global businesses cite multilingual support as a critical requirement for their AI chatbot implementations. Yet most enterprise deployments still begin with a monolingual model and retrofit language support as an afterthought.

The result is predictable: containment rates collapse the moment a customer switches from standard Mandarin to Cantonese, from formal Bahasa Indonesia to Javanese-inflected colloquial speech, or from English to Singlish. These are not edge cases. They are the majority of real customer conversations in the region.

“74% of global businesses cite multilingual support as a critical requirement for their AI implementations, yet most APAC deployments still start with an English-first model.”

Meanwhile, the executive pressure to act is intensifying. Gartner’s December 2024 survey of 187 customer service leaders found that 85% will explore or pilot a customer-facing conversational GenAI solution in 2025, with 44% specifically exploring voice AI. More than 75% said they feel direct pressure from executive leadership to implement GenAI. The window for deliberate, well-architected multilingual AI deployment is measured in months, not years.

The Four Core Use Cases Driving APAC Adoption

The four highest-ROI use cases for multilingual AI in APAC contact centres are: automated voice self-service in local languages, real-time agent assist with language coaching, cross-lingual chat and messaging automation, and intent-based omnichannel routing.

Voice IVR and Conversational Self-Service replaces static touch-tone menus with natural language dialogue in the customer’s own language. For example, a telco operating across Indonesia, Malaysia, and Singapore can deploy a single conversational AI layer that auto-detects Bahasa Indonesia, Bahasa Melayu, Mandarin, and Tamil, and routes each call to the correct knowledge domain. McKinsey’s 2025 contact centre analysis shows leaders now aim to automate more than 60% of voice interactions, and that GenAI-enabled agents already achieve a 14% increase in issue resolution per hour.

Real-Time Agent Assist surfaces suggested responses, policy lookups, and escalation prompts to human agents in their working language, while the customer speaks in theirs. An agent in Manila handling a Mandarin-speaking customer from Hong Kong receives translated intent summaries and suggested replies without switching screens.

Cross-Lingual Chat and Messaging covers platforms dominant in APAC: WeChat in China, LINE in Thailand and Japan, WhatsApp across Southeast Asia, and KakaoTalk in Korea. Each platform carries its own conversational norms, character limits, and code-switching patterns. A unified AI layer must handle all of them.

Omnichannel Intent Routing uses language detection and intent classification to place the customer in the correct queue on the first attempt. Accenture’s 2024 research reports that AI-led companies achieve 2.5x higher revenue growth than peers. In a contact centre, one plausible contributor is eliminating the transfer loops that destroy customer satisfaction.

Architecture for a Multilingual AI Contact Centre

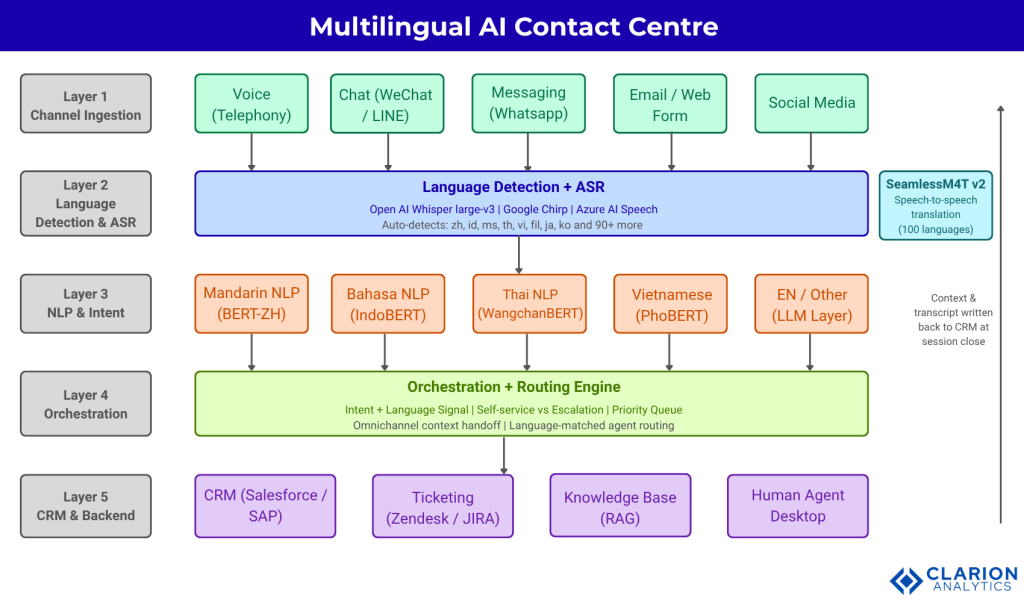

A production-ready multilingual AI contact centre stack has five layers: channel ingestion, language detection and ASR, NLP and intent classification, orchestration and routing, and CRM and backend integration. Each layer must be independently configurable per language.

Fig 1. Five-layer multilingual AI contact centre architecture for APAC markets. Customer voice or text enters via any channel (Layer 1), passes through automatic language detection and ASR (Layer 2), then routes to a language-specific NLP engine for intent classification (Layer 3). The orchestration layer (Layer 4) uses the intent and language signals to either resolve the request through self-service or escalate it with full context to the CRM and agent desktop (Layer 5). Context and transcript are written back to the CRM at session close, creating a durable multilingual interaction record.

Layer 1 (Channel Ingestion) normalises input from telephony, chat, messaging, email, and social into a unified stream. Voice is converted to audio chunks; text is normalised for encoding (critical for Traditional vs Simplified Chinese and Thai script).
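As a minimal sketch of the text-normalisation step, the function below applies Unicode NFKC normalisation and whitespace cleanup using only the Python standard library. The function name `normalise_text` is an illustrative assumption, not part of any platform described here. It handles encoding-level normalisation only and does not convert between Traditional and Simplified Chinese, which would need a separate conversion step.

```python
import re
import unicodedata


def normalise_text(raw: str) -> str:
    """Return an NFKC-normalised, whitespace-collapsed copy of `raw`."""
    # NFKC folds compatibility forms such as full-width Latin letters,
    # full-width digits, and the ideographic space into their plain forms.
    # Caveat: NFKC also rewrites some compatibility characters (Thai
    # SARA AM is one), so check the output against what the downstream
    # tokenizer expects before adopting it for Thai.
    text = unicodedata.normalize("NFKC", raw)
    # Strip zero-width spaces and byte-order marks left by some chat clients.
    text = text.replace("\u200b", "").replace("\ufeff", "")
    # Collapse runs of whitespace into single spaces.
    return re.sub(r"\s+", " ", text).strip()
```

Running every inbound message through one function like this before language detection keeps the later layers from seeing the same customer utterance in several byte-level variants.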

Layer 2 (Language Detection and ASR) is where most deployments encounter their first major failure point. Off-the-shelf ASR models perform well on high-resource languages like Mandarin and standard Indonesian but degrade significantly on regional dialects. Adila et al. (2024) demonstrated that multilingual ASR models evaluated on Indonesian speech with real-world acoustic variability, including regional accent variation and background noise, show meaningful performance gaps compared to clean benchmark scores.

Layer 3 (NLP and Intent Classification) must be language-specific, not language-agnostic. A single multilingual BERT model will not match the accuracy of a language-native model fine-tuned on domain-specific contact centre data. Teams that deploy IndoBERT for Bahasa Indonesia queries, WangchanBERTa for Thai, and PhoBERT for Vietnamese consistently achieve higher intent accuracy than those using a single multilingual model.
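One way to wire language-specific models into Layer 3 is a small registry keyed by the language code that Layer 2 emits. The sketch below is an assumption about how such a lookup could be structured; `NlpModelSpec`, `REGISTRY`, and `select_nlp_model` are hypothetical names. The checkpoint identifiers are the publicly listed Hugging Face base models, which would still need fine-tuning on contact centre intent data before use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NlpModelSpec:
    language: str    # language code emitted by Layer 2
    checkpoint: str  # Hugging Face model identifier (base model, not fine-tuned)


# Fallback for languages without a language-native model (illustrative choice).
FALLBACK = NlpModelSpec("multilingual", "xlm-roberta-base")

REGISTRY = {
    "id": NlpModelSpec("id", "indobenchmark/indobert-base-p1"),
    "th": NlpModelSpec("th", "airesearch/wangchanberta-base-att-spm-uncased"),
    "vi": NlpModelSpec("vi", "vinai/phobert-base"),
}


def select_nlp_model(language_code: str) -> NlpModelSpec:
    """Pick the language-native model, or the multilingual fallback."""
    return REGISTRY.get(language_code.lower(), FALLBACK)
```

Adding a language then means adding one registry entry rather than changing any calling code.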

Layer 4 (Orchestration) is the decision engine. It reads the language signal and intent from Layer 3 and determines whether the interaction is resolved by self-service, escalated to a human, or transferred to a specialist queue. The orchestration layer also manages context handoff, ensuring the agent receiving an escalated call sees the full transcript in their working language.

Layer 5 (CRM and Backend Integration) closes the loop. Every interaction, regardless of language, writes a structured summary back to the CRM, updating the customer record in a consistent schema. This is the layer most teams underestimate in planning, and it is the layer most responsible for delayed go-live timelines.
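To make "consistent schema" concrete, here is a hedged sketch of a language-agnostic interaction summary serialised as JSON. The field names and the `to_crm_payload` helper are assumptions for illustration; a real CRM will have its own object model and field mapping.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class InteractionSummary:
    customer_id: str
    channel: str              # e.g. "voice", "whatsapp", "line"
    language: str             # language code detected in Layer 2
    intent: str               # classified intent, same label set for every language
    intent_confidence: float
    resolved_by: str          # "self_service" or "agent"
    transcript_ref: str       # pointer to the stored transcript, not the raw text


def to_crm_payload(summary: InteractionSummary) -> str:
    """Serialise the summary; ensure_ascii=False keeps non-Latin scripts readable."""
    return json.dumps(asdict(summary), ensure_ascii=False, sort_keys=True)
```

The point of the shape is that language is a field on the record rather than a reason to use a different record type.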

Code Snippet 1: Whisper Multilingual Language Detection and Transcription

Source: openai/whisper, https://github.com/openai/whisper

This snippet loads Whisper large-v3 and automatically identifies the spoken language of an incoming call before transcription begins. For an APAC contact centre receiving calls in Mandarin, Bahasa Indonesia, Malay, or Thai, this removes the need for separate telephony queues per language. The detected language code (for example, ‘id’ for Indonesian or ‘zh’ for Mandarin) then passes downstream to Layer 3 to select the correct NLP model.
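A minimal sketch of the flow described above, following the usage pattern shown in the openai/whisper README. The helper names `top_language` and `detect_and_transcribe` are illustrative assumptions, and the code assumes the `openai-whisper` package and ffmpeg are installed. Language detection here uses only the first 30 seconds of audio.

```python
from typing import Dict, Tuple


def top_language(probs: Dict[str, float]) -> Tuple[str, float]:
    """Return the most likely language code and its probability."""
    code = max(probs, key=probs.get)
    return code, probs[code]


def detect_and_transcribe(audio_path: str, model_name: str = "large-v3") -> dict:
    """Detect the spoken language, then transcribe in that language."""
    import whisper  # imported lazily: pip install -U openai-whisper

    model = whisper.load_model(model_name)

    # Language detection runs on a 30-second log-Mel spectrogram window.
    audio = whisper.pad_or_trim(whisper.load_audio(audio_path))
    mel = whisper.log_mel_spectrogram(audio, n_mels=model.dims.n_mels).to(model.device)
    _, probs = model.detect_language(mel)
    language, confidence = top_language(probs)

    # Transcribe the full file with the detected language fixed.
    result = model.transcribe(audio_path, language=language)
    return {
        "language": language,      # e.g. "id" or "zh", passed on to Layer 3
        "confidence": confidence,
        "text": result["text"].strip(),
    }
```

In a live deployment the model would be loaded once at startup rather than per call, and a low detection confidence is a reasonable trigger for asking the caller to choose a language.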

“A voice AI model fine-tuned on formal Bahasa Indonesia will misclassify roughly one-third of real customer queries from Java or Sumatra, because regional dialect variance is not an edge case. It is the norm.”

Choosing the Right Language Models for APAC

For APAC deployments, the most production-proven options are OpenAI Whisper for ASR, Meta’s SeamlessM4T for cross-lingual speech translation, and language-specific BERT variants such as IndoBERT, WangchanBERTa, and PhoBERT for NLP intent classification.

| Option | Key Strength | Best Used When |
|---|---|---|
| OpenAI Whisper (large-v3) | Zero-shot multilingual ASR across 99 languages; robust to accent and background noise | You need a single voice input layer that auto-detects language without pre-routing calls |
| Meta SeamlessM4T v2 | End-to-end speech-to-speech and speech-to-text translation in ~100 languages with prosody preservation | You have multilingual agents or need real-time cross-language communication within a single call |
| IndoBERT / PhoBERT / WangchanBERTa | Language-native BERT models outperform multilingual models on intent and NER in Bahasa Indonesia, Vietnamese, and Thai | You need high-accuracy NLP for a specific APAC language rather than broad-coverage general performance |
| Google Chirp / Azure AI Speech (APAC) | Enterprise SLA, managed infrastructure, low-latency streaming ASR with regional data residency | You need managed cloud ASR with guaranteed uptime and regulatory compliance across APAC markets |
| Commercial LLMs (GPT-4o, Claude, Gemini) | Strong multilingual generative response; handles code-switching such as Singlish and Manglish | You need a generative response layer beyond classification, handling complaint resolution or dynamic FAQs |

Meta’s SeamlessM4T, first released in August 2023 with v2 following in late 2023, reported state-of-the-art results for direct speech-to-text translation, with a 20% BLEU improvement over prior systems across ~100 languages including APAC priority languages. The facebookresearch/seamless_communication repository (11,700 stars, actively maintained) provides the production-ready implementation.

For Bahasa-specific NLP, the IndoNLP/indonlu benchmark remains the standard evaluation framework, covering 12 downstream tasks across formal and colloquial Indonesian. Winata et al. (NusaX, EACL 2023) demonstrated that NLP models trained only on standard Bahasa Indonesia fail dramatically on the 10 major regional language variants spoken by the majority of the Indonesian population, including Javanese, Sundanese, and Balinese speakers.

Code Snippet 2: IndoBERT Intent Classification for Bahasa Customer Queries

Source: IndoNLP/indonlu, https://github.com/IndoNLP/indonlu

This snippet runs a Bahasa Indonesia customer query through IndoBERT for intent classification. In a live contact centre, this runs immediately after ASR transcription and determines whether the customer routes to complaint handling, a self-service billing flow, or a human escalation queue. IndoBERT outperforms generic multilingual BERT models by 8% or more on Indonesian intent and sentiment tasks when evaluated against the IndoNLU benchmark.
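A hedged sketch of that classification step using the Hugging Face `transformers` library. The intent labels and the function names are hypothetical. The default checkpoint is the public IndoBERT base model, whose classification head is randomly initialised, so predictions are only meaningful after fine-tuning on labelled contact centre queries; in practice you would point `checkpoint` at your own fine-tuned model.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical label set; a real deployment defines its own intents.
INTENT_LABELS = ["billing", "complaint", "human_escalation"]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over raw model scores."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def pick_intent(logits: Sequence[float],
                labels: Sequence[str] = INTENT_LABELS) -> Tuple[str, float]:
    """Map raw logits to the most likely intent label and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


def classify_query(text: str,
                   checkpoint: str = "indobenchmark/indobert-base-p1") -> Tuple[str, float]:
    """Run one Bahasa Indonesia query through an IndoBERT classifier."""
    import torch  # imported lazily: pip install torch transformers
    from transformers import BertForSequenceClassification, BertTokenizer

    tokenizer = BertTokenizer.from_pretrained(checkpoint)
    model = BertForSequenceClassification.from_pretrained(
        checkpoint, num_labels=len(INTENT_LABELS)
    )
    model.eval()

    inputs = tokenizer(text, return_tensors="pt", truncation=True, max_length=128)
    with torch.no_grad():
        logits = model(**inputs).logits[0].tolist()
    return pick_intent(logits)
```

The returned probability gives the orchestration layer a confidence value to act on, for example escalating to a human when it falls below an agreed floor.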

Implementation Roadmap: From Pilot to Production

APAC multilingual AI deployments succeed when they follow a three-phase roadmap: start with one high-volume language and channel, validate accuracy against a dialect-representative test set, then add languages incrementally using a shared orchestration layer rather than siloed stacks per language.

In practice, teams building this typically find that the language coverage question gets answered too early and too optimistically. The instinct is to build for all target languages simultaneously. The result is a system that performs adequately in none of them because the training data, the test sets, and the dialect coverage are all spread too thin.

Phase 1: Single-Language, Single-Channel Pilot (Weeks 1 to 8)

Choose the highest-volume language and the channel with the cleanest data. Build the full Layer 1 to Layer 5 stack for that language. Validate ASR word error rate (WER) against a test set that includes regional accent variation, not just clean studio speech. The acceptable WER threshold for production voice AI varies by use case, but 15% or below is the working benchmark for APAC consumer contact centres. Set intent classification accuracy targets before selecting models. 85% accuracy on a held-out test set is the minimum viable threshold; 90% or above is the production target.
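For reference, WER is the number of word substitutions, deletions, and insertions needed to turn the ASR output into the reference transcript, divided by the number of words in the reference. The sketch below computes it with a standard edit-distance table. It assumes whitespace-separated words, which suits Indonesian, Malay, or Vietnamese; for unsegmented scripts such as Thai or Chinese, teams commonly segment first or report character error rate instead.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution or exact match
            ))
        prev = curr
    return prev[-1] / len(ref)
```

For example, if the reference is "saya mau cek tagihan" and the ASR output drops "cek", the result is one deletion over four reference words, a WER of 25%.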

Phase 2: Language Expansion with Shared Orchestration (Weeks 9 to 20)

Add language two and three by plugging new ASR and NLP models into the existing orchestration layer. The orchestration layer must not need to know which language it is handling. It receives a language code and an intent signal and routes accordingly. Any architecture where the orchestration layer contains language-specific logic will not scale beyond three or four languages without becoming unmaintainable.
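The sketch below illustrates what "no language-specific logic" can look like in code. The router treats the language code as opaque data that only labels the destination queue, so adding a language needs no change here. The `Signal` type, the intent names, the queue naming scheme, and the confidence floor are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    language: str      # code from Layer 2, never inspected by the router
    intent: str        # label from Layer 3
    confidence: float  # classifier confidence in [0, 1]


# Hypothetical configuration; real values come from the deployment.
SELF_SERVICE_INTENTS = {"billing_enquiry", "balance_check"}
CONFIDENCE_FLOOR = 0.7


def route(signal: Signal) -> str:
    """Return a queue name based only on intent and confidence."""
    if signal.confidence < CONFIDENCE_FLOOR:
        # Low confidence: hand off to a human who works in that language.
        return f"agent:{signal.language}"
    if signal.intent in SELF_SERVICE_INTENTS:
        return f"self_service:{signal.intent}"
    return f"specialist:{signal.intent}:{signal.language}"
```

Note that there is no `if language == ...` branch anywhere; the moment one appears, the router has started to accumulate per-language logic.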

Phase 3: Dialect Hardening and Continuous Learning (Ongoing)

Collect real interaction data from production and use it to fine-tune ASR and NLP models against actual dialect patterns. Gartner (March 2025) projects that by 2029, agentic AI will autonomously resolve 80% of common customer service issues. Reaching that benchmark in APAC requires ongoing dialect-representative training, not a one-time model selection.

Data residency and regulatory compliance are non-negotiable in APAC. Indonesia’s Personal Data Protection Law, Thailand’s PDPA, and China’s PIPL each impose constraints on where voice data can be processed and stored. Build data residency requirements into the architecture at Phase 1, not Phase 3.

“Teams building multilingual AI for APAC consistently underestimate one thing: the gap between a demo that passes QA in Singapore English and a model that handles a Surabaya customer mid-complaint in Javanese-inflected Bahasa.”

Measuring Success: KPIs That Actually Matter

The most meaningful KPIs for multilingual AI in APAC contact centres are: AI containment rate by language, word error rate per dialect, time-to-intent, human escalation rate, and CSAT delta between AI-handled and human-handled interactions.

Containment rate without language segmentation is a misleading metric. A system that achieves 70% containment overall but only 45% for Bahasa Indonesia and 30% for Vietnamese is not a multilingual system. It is a Mandarin and English system with incomplete language support. Report containment by language from day one.
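A short sketch of segmented reporting, assuming each interaction is logged as a pair of language code and a flag for whether the AI resolved it without human handoff. The function name and the input shape are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def containment_by_language(
    interactions: Iterable[Tuple[str, bool]],
) -> Dict[str, float]:
    """Return the share of AI-contained interactions for each language."""
    totals: Dict[str, int] = defaultdict(int)
    contained: Dict[str, int] = defaultdict(int)
    for language, was_contained in interactions:
        totals[language] += 1
        if was_contained:
            contained[language] += 1
    return {lang: contained[lang] / totals[lang] for lang in totals}
```

Fed a log where Mandarin calls are all contained and Vietnamese calls never are, the overall average looks respectable while the per-language view exposes the gap immediately.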

WER per dialect is the leading indicator for voice AI quality. If Indonesian ASR is returning high WER on calls from East Java, the downstream NLP layer is classifying noise, not intent. Fix the ASR layer before optimising the NLP layer.

CSAT delta measures the satisfaction difference between customers resolved by AI and those escalated to humans. In a well-tuned system, this delta should be positive or neutral. A negative delta, where customers handled by AI report lower satisfaction than those handled by humans, indicates the AI is handling cases it cannot resolve, which means escalation thresholds need recalibration.
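To pin down the sign convention, the sketch below defines the delta as mean AI-handled CSAT minus mean human-handled CSAT, so a negative value means AI-handled customers were less satisfied. The function name and the 1-to-5 survey scale in the example are assumptions.

```python
from statistics import mean
from typing import Sequence


def csat_delta(ai_scores: Sequence[float], human_scores: Sequence[float]) -> float:
    """Mean CSAT of AI-resolved contacts minus mean CSAT of human-resolved ones."""
    return mean(ai_scores) - mean(human_scores)
```

With AI-handled scores of 4 and 4 against human-handled scores of 5 and 5, the delta is -1, which under the reading above would suggest revisiting escalation thresholds. Computing it per language, as with containment, avoids one strong language masking a weak one.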

Accenture’s 2024 “Reinventing Enterprise Operations” research, surveying 2,000 executives across 12 countries and 15 industries, found that AI-led companies achieve 2.4x greater productivity than peers. That productivity gain in a contact centre context translates directly to handling more volume in more languages without proportional headcount growth.

Frequently Asked Questions

What languages should an APAC contact centre prioritise for AI deployment first?

Prioritise by interaction volume and available training data. For most APAC enterprises, Mandarin (Simplified), Bahasa Indonesia, and English cover 60 to 70% of total contact volume. Deploy these three first with a full five-layer architecture, then expand to Thai, Vietnamese, and Tagalog in subsequent phases. Avoid launching languages where you have fewer than 5,000 annotated training samples for intent classification.

How does voice AI handle code-switching, for example a customer mixing Mandarin and English?

Modern multilingual ASR models including Whisper large-v3 and Google Chirp handle code-switching by transcribing in the dominant language and passing through the secondary language tokens. The NLP layer must be trained on code-switched examples for the target market, for example Singlish (Singapore), Manglish (Malaysia), and Taglish (Philippines). Commercial LLMs such as GPT-4o and Claude handle code-switched text well at the generative response layer.

What accuracy benchmark should Bahasa Indonesia voice AI hit before going live?

The working industry benchmark is 15% or below word error rate (WER) on a test set that includes regional accent variation from at least three major Indonesian island groups: Java, Sumatra, and Sulawesi. Intent classification accuracy should reach 85% minimum on a held-out test set before production launch. Models achieving below these thresholds produce enough misroutes to increase, not decrease, human escalation volume.
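These thresholds can be encoded as an automated release gate. The sketch below is one possible shape, using the 15% WER and 85% intent accuracy figures quoted above; the function name and the choice to require every region to pass individually are assumptions.

```python
from typing import Dict, List, Tuple

MAX_WER = 0.15              # working benchmark from the text above
MIN_INTENT_ACCURACY = 0.85  # minimum viable threshold from the text above


def ready_for_launch(
    wer_by_region: Dict[str, float], intent_accuracy: float
) -> Tuple[bool, List[str]]:
    """Return (passed, reasons for failure) for a go-live check."""
    failures = [
        f"WER {wer:.0%} in {region} exceeds {MAX_WER:.0%}"
        for region, wer in sorted(wer_by_region.items())
        if wer > MAX_WER
    ]
    if intent_accuracy < MIN_INTENT_ACCURACY:
        failures.append(
            f"intent accuracy {intent_accuracy:.0%} is below {MIN_INTENT_ACCURACY:.0%}"
        )
    return (not failures, failures)
```

Checking each region separately means a strong result on Java cannot hide a weak one on Sulawesi behind a blended average.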

How do I connect multilingual AI to my existing CRM and telephony platform?

The orchestration layer (Layer 4) passes each interaction to the CRM and backend integration layer (Layer 5) via API. Most enterprise CRMs including Salesforce, SAP, and Zendesk expose REST APIs that accept structured intent and language data from the AI layer. Telephony integration uses SIP trunking for voice and webhooks for messaging channels. The critical requirement is that the CRM schema is language-agnostic: store customer intent as a classified field, not as raw transcribed text in a language-specific column.

Is open-source ASR like Whisper good enough for enterprise contact centres?

Whisper large-v3 is production-grade for most APAC contact centre use cases. It handles 99 languages including all major APAC languages, is robust to background noise, and runs on-premise for data residency compliance. The trade-offs versus managed cloud ASR (Google Chirp, Azure AI Speech) are: Whisper requires your own inference infrastructure and does not come with an enterprise SLA. For regulated industries such as banking and healthcare in markets with strict data localisation laws, on-premise Whisper is often the only compliant option.

Conclusion

Three insights define every successful multilingual AI customer service deployment in APAC. First, language coverage is not the same as language quality: supporting 10 languages at 60% accuracy delivers worse outcomes than supporting 4 languages at 90% accuracy. Second, dialect variance is structural, not anecdotal: the gap between formal written Bahasa and the colloquial speech patterns of 270 million Indonesian customers is an engineering problem that requires dialect-representative training data, not a larger general model. Third, the orchestration layer is the architecture: any system where language-specific logic lives in the routing engine rather than in the model layer will collapse under the weight of language expansion.

Gartner projects that by 2029, agentic AI will autonomously resolve 80% of common customer service issues. In APAC, that level is only reachable for contact centres that treat language as a first-class engineering constraint from day one.

The question is not whether to deploy multilingual AI customer service. It is whether your architecture can scale to the next language without rebuilding the last one.