DEFINITION: Explainable AI (XAI) refers to a set of methods, tools, and architectural patterns that make machine learning model outputs interpretable to human stakeholders. In regulated industries, XAI is not optional: it is the technical mechanism for satisfying transparency obligations imposed by frameworks such as the MAS AIRG (Singapore), the RBI FREEAI committee (India), and APRA’s emerging AI governance guidance (Australia). A production-grade XAI system generates explanations at inference time, logs them for audit, and surfaces them in formats meaningful to both technical and non-technical audiences.

The Explainability Gap Is a Production Problem

According to McKinsey (2024), 40 percent of organisations surveyed identified explainability as a key risk in adopting generative AI. Only 17 percent said they were actively mitigating it. That gap is not a strategy problem. It is an engineering problem. XAI frameworks suited to production use in regulated industries exist, the tooling is mature, and the regulatory mandate is now explicit. The bottleneck is implementation.

Teams in APAC financial services, insurance, and healthcare are operating under mounting pressure. Regulators are no longer signalling intent; they are issuing timelines. The Monetary Authority of Singapore published its AI Risk Management Guidelines (AIRG) in 2025. India’s Reserve Bank released the FREEAI committee report the same year. Australia’s APRA has flagged explainability as a direct obligation under its Financial Accountability Regime (FAR). The question is no longer whether to build XAI into production. It is how to do it without wrecking your inference latency.

This post walks through the technical decisions that matter: which explainability method to pick for which model type, how to architect the explanation pipeline so it does not slow down your API, and how to translate SHAP values and anchor rules into outputs that actually satisfy a compliance audit.


Why APAC Regulators Now Mandate It

MAS, the RBI, and APRA have each issued or proposed AI governance frameworks that specifically name transparency and explainability as lifecycle controls for financial institutions. These are not high-level principles documents; they prescribe operational requirements with audit implications.

Singapore: MAS AIRG 2025

MAS AIRG requires financial institutions to implement proportionate controls across the full AI lifecycle, with transparency and explainability listed explicitly alongside data management, fairness, and human oversight. KPMG analysis of the guidelines notes a 12-month transition period from the issuance date, which means many Singapore-licensed firms are already in implementation mode.

India: RBI FREEAI and the Transparency Mandate

The RBI FREEAI committee (2025) proposes mandatory algorithmic audits, board-level AI oversight committees, and explicit requirements for financial institutions to explain AI-driven decisions to affected customers. High-risk deployments including credit scoring and healthcare diagnostics will likely face mandatory pre-deployment assessments.

Australia: APRA and the FAR Implications

MinterEllison’s analysis (2025) of APRA’s Financial Accountability Regime notes that while APRA has not yet issued specific AI guidance, a robust AI governance framework will require that credit scoring and pricing decisions are explainable and do not unfairly discriminate against customers.

BIS/HKMA Project Noor: Regional XAI Prototyping

The BIS Innovation Hub Project Noor (August 2025), run in collaboration with the Hong Kong Monetary Authority and the FCA, specifically prototypes explainable AI techniques for financial supervisors. This signals that regulators themselves are building XAI capabilities to scrutinise financial institution models.

Regulators are no longer signalling intent; they are issuing timelines. Singapore’s MAS AIRG is live. India’s RBI FREEAI is in implementation. Australia’s APRA is following. The compliance clock is running.

Where XAI Delivers the Most Value in Practice

In APAC financial services, XAI is most commonly deployed in credit scoring, fraud detection, insurance underwriting, and clinical triage, where black-box decisions carry regulatory or legal liability. Each use case has a different explanation requirement.

Credit Scoring and Loan Approval

This is the highest-volume use case across APAC banking. A credit decision model that rejects an application must produce a per-decision explanation the institution can defend to a regulator or a customer. Research on XAI for credit scoring (Springer, 2025) demonstrates that SHAP applied to Random Forest and gradient boosting models produces stable, auditable feature attributions. SHAP values show exactly which input variables drove the decision and by how much, a format that maps cleanly to adverse-action notice requirements.

Fraud Detection and AML

Anti-money laundering models flag transactions for review. A compliance analyst reviewing a flagged transaction needs to know why the model flagged it, not just that it did. Anchor explanations, which produce if-then rules such as “this transaction was flagged because amount > $50,000 AND recipient country = high-risk AND time of day = 02:00-04:00”, are directly readable by non-technical analysts and usable as audit evidence.

Insurance Underwriting and Claims Triage

LIME is well-suited to insurance use cases where the underlying model is a neural network and TreeSHAP is unavailable. In practice, teams building this typically find that the latency cost of generating LIME explanations for every claim is prohibitive, and batch explanation for overnight claim queues is the practical deployment pattern.

Choosing the Right Explainability Method for Your Stack

SHAP provides global and local explanations with strong theoretical guarantees. LIME is faster to implement but limited to local scope. Anchors produce human-readable rules best suited for compliance audits. InterpretML’s EBM gives you a glassbox model accurate enough to replace gradient boosting in many settings.

| Method | Key Strength | Best Used When |
|---|---|---|
| SHAP (TreeExplainer) | Fast for tree models; both local and global scope; game-theoretic consistency | Credit scoring or fraud models on XGBoost/LightGBM where auditable per-decision explanations are required |
| LIME (Local Surrogate) | Model-agnostic; works on any black-box; quick to prototype | Neural network models where TreeExplainer is unavailable and local, per-instance explanations suffice |
| Anchors (Alibi) | Produces if-then rules; directly readable by non-technical stakeholders | Compliance reviews and adverse-action notices where numeric feature weights are insufficient |
| InterpretML EBM | Glassbox model; accuracy comparable to gradient boosting; inherently interpretable | New model builds where interpretability can be designed in from the start |

The 2024 paper by Salih et al. in Advanced Intelligent Systems provides a rigorous comparison showing SHAP outperforms LIME in the presence of correlated features. For APAC credit datasets with high feature correlation between income, employment status, and loan history, SHAP is the safer default. LIME’s independence assumption between features introduces instability that is difficult to defend in a regulatory audit.

Production Architecture for XAI at Scale

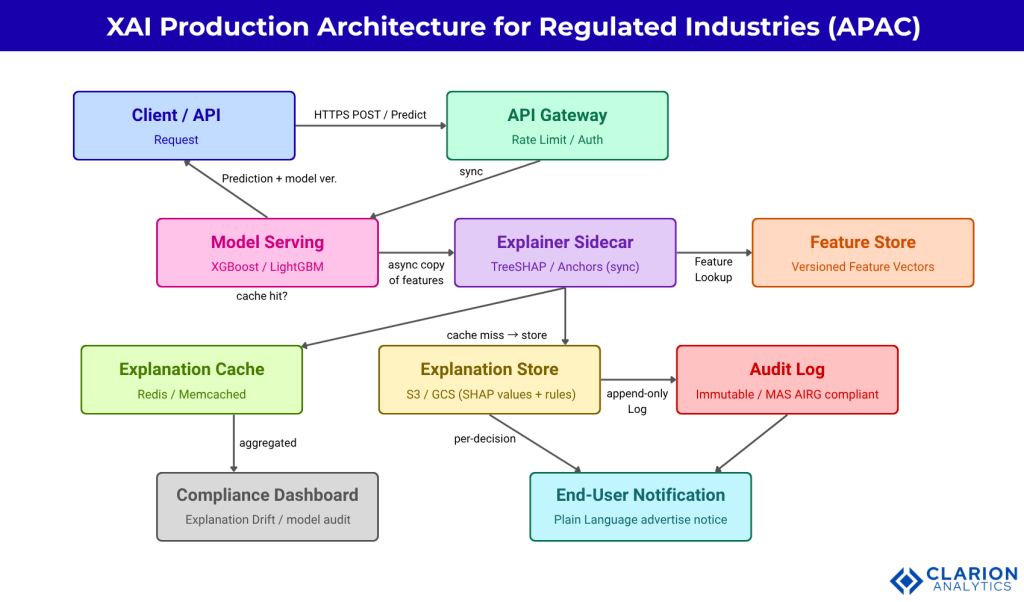

A production XAI pipeline has five layers: model serving, an asynchronous explainer sidecar, an explanation cache, an explanation store, and a human-readable delivery layer for compliance and end-user surfaces. The critical design principle is that the explanation computation must not sit on the synchronous inference path.

Figure: XAI Production Architecture for Regulated Industries in APAC. The synchronous inference path returns predictions without blocking on explanation compute. The async explainer sidecar writes SHAP values and anchor rules to the explanation store, with every write append-logged to satisfy MAS AIRG and RBI FREEAI audit requirements. The compliance dashboard aggregates explanation drift metrics, flagging cases where explanation patterns diverge from expected model behaviour.

The Async Sidecar Pattern

After the model returns a prediction, the inference service publishes the feature vector and prediction to a message queue. The explainer sidecar consumes this message and first checks the explanation cache (Redis) for a result computed on an identical feature vector. On a cache miss, it computes SHAP values or anchor rules and caches them. In both cases it writes the explanation to the explanation store (S3 or GCS), where it becomes available to the compliance dashboard and the adverse-notice delivery layer. Because none of this work happens on the request path, the inference API SLA stays clean.
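The flow above can be sketched with the standard library alone. This is a minimal illustration, not a production design: `queue.Queue` stands in for the message broker, a dict for Redis, a list for the object store, and `ExplainerSidecar`, `predict_and_publish`, and the toy scoring rule are all hypothetical names invented for the example.

```python
import hashlib
import json
import queue
import threading


def feature_hash(features):
    """Stable hash of a feature dict, used as the cache key."""
    canonical = json.dumps(features, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class ExplainerSidecar:
    """In-memory stand-in for the queue consumer, cache, and store."""

    def __init__(self):
        self.events = queue.Queue()  # stands in for the message queue
        self.cache = {}              # stands in for Redis
        self.store = []              # stands in for S3 or GCS
        self.compute_calls = 0

    def compute_explanation(self, features):
        """Placeholder for the expensive explainer call (SHAP, anchors)."""
        self.compute_calls += 1
        return {name: round(0.1 * value, 4) for name, value in features.items()}

    def run(self):
        """Consume events until a None sentinel arrives."""
        while True:
            event = self.events.get()
            if event is None:
                break
            key = feature_hash(event["features"])
            if key not in self.cache:  # compute only on a cache miss
                self.cache[key] = self.compute_explanation(event["features"])
            self.store.append({
                "request_id": event["request_id"],
                "feature_hash": key,
                "prediction": event["prediction"],
                "explanation": self.cache[key],
            })


def predict_and_publish(sidecar, request_id, features):
    """Synchronous path: score, enqueue the event, return immediately."""
    prediction = 1 if sum(features.values()) > 10 else 0  # toy model
    sidecar.events.put({"request_id": request_id,
                        "features": features,
                        "prediction": prediction})
    return prediction


def run_demo():
    sidecar = ExplainerSidecar()
    worker = threading.Thread(target=sidecar.run)
    worker.start()
    predict_and_publish(sidecar, "req-1", {"income": 8.0, "tenure": 3.0})
    predict_and_publish(sidecar, "req-2", {"income": 8.0, "tenure": 3.0})  # same vector
    predict_and_publish(sidecar, "req-3", {"income": 2.0, "tenure": 1.0})
    sidecar.events.put(None)  # stop the worker
    worker.join()
    return sidecar
```

Calling `run_demo()` stores three explanations while invoking the explainer only twice, because the second request reuses the cached result for an identical feature vector. The prediction is returned before any explanation work starts, which is the property the pattern exists to protect.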

Audit Log Design for Regulatory Compliance

Each explanation entry in the audit log must capture: the request ID, the model version, the feature vector hash, the explanation method used, the computed explanation payload, and the timestamp. The log must be append-only and immutable. For MAS AIRG, you need to demonstrate that an explanation for any past decision can be retrieved and cross-referenced with the model version that produced it. S3 Object Lock or an equivalent write-once store is the standard implementation pattern.
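As a rough sketch of the record shape listed above, the following uses a frozen dataclass with the six named fields. The `prev_entry_hash` field and the hash chain are an added assumption for tamper evidence at the application level; the text itself only calls for a write-once store such as S3 Object Lock, which this in-memory list does not replace. `AuditEntry` and `AppendOnlyAuditLog` are hypothetical names.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


@dataclass(frozen=True)
class AuditEntry:
    request_id: str
    model_version: str
    feature_vector_hash: str
    explanation_method: str
    explanation_payload: dict
    timestamp: str
    prev_entry_hash: str  # assumption: chaining is not required by the text


def sha256_json(obj):
    """Hash a JSON-serialisable object in a key-order-independent way."""
    canonical = json.dumps(obj, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class AppendOnlyAuditLog:
    """Exposes append and read operations only; no update or delete."""

    def __init__(self):
        self._entries = []

    def append(self, request_id, model_version, features, method, payload):
        if self._entries:
            prev = sha256_json(asdict(self._entries[-1]))
        else:
            prev = GENESIS_HASH
        entry = AuditEntry(
            request_id=request_id,
            model_version=model_version,
            feature_vector_hash=sha256_json(features),
            explanation_method=method,
            explanation_payload=payload,
            timestamp=datetime.now(timezone.utc).isoformat(),
            prev_entry_hash=prev,
        )
        self._entries.append(entry)
        return entry

    def find(self, request_id):
        """Retrieve the explanation(s) recorded for a past decision."""
        return [e for e in self._entries if e.request_id == request_id]

    def verify_chain(self):
        """Return False if any stored entry was altered after being written."""
        expected = GENESIS_HASH
        for entry in self._entries:
            if entry.prev_entry_hash != expected:
                return False
            expected = sha256_json(asdict(entry))
        return True
```

Storing the feature vector hash rather than the raw vector keeps personal data out of the log while still letting an auditor confirm that a retrieved explanation matches the inputs held elsewhere.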


Implementation Guide: From Prototype to Production

Teams building XAI for production should start with TreeSHAP on tree models, cache explanations for identical feature vectors, and separate the explanation service from the inference path. In practice, teams that try to run KernelSHAP or standard LIME synchronously on every inference request routinely discover that explanation latency is 10-100x the prediction latency. The async sidecar pattern is not optional at production scale.

Snippet 1: TreeSHAP on LightGBM (Credit Scoring)

Source: shap/shap, census_income_lightgbm.ipynb (shap/shap, ~25,100 stars, actively maintained 2025)

TreeExplainer computes exact Shapley values for tree-based models in polynomial rather than exponential time. For a LightGBM credit model with 50 features, a single-instance explanation typically runs in under 5ms, making it viable for near-synchronous delivery. The base value plus the per-feature SHAP values sums exactly to the model’s log-odds output for that instance, giving a mathematically consistent feature attribution that regulators can verify.
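The additivity property can be checked by hand on a small example. The sketch below is not the notebook's code and does not use TreeSHAP: it computes exact Shapley values by brute-force enumeration of feature coalitions, which is the exponential-time calculation that TreeExplainer avoids. The scoring function `log_odds`, the feature names, and the single-point baseline are all hypothetical.

```python
from itertools import combinations
from math import exp, factorial


def log_odds(features):
    """Hypothetical credit score in log-odds, with one interaction term."""
    return (-1.0
            + 0.8 * features["income"]
            - 0.5 * features["debt_ratio"]
            + 0.3 * features["income"] * features["tenure"])


def shapley_values(model, instance, baseline):
    """Exact Shapley values against a single baseline point.

    Features outside a coalition take their baseline value. The loop
    visits every subset of the other features, so cost grows as 2^n.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {k: instance[k] if k in coalition else baseline[k] for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                without = set(subset)
                total += weight * (value(without | {name}) - value(without))
        phi[name] = total
    return phi


instance = {"income": 2.0, "debt_ratio": 2.0, "tenure": 3.0}
baseline = {"income": 1.0, "debt_ratio": 1.0, "tenure": 1.0}

base_value = log_odds(baseline)
phi = shapley_values(log_odds, instance, baseline)

# Additivity: base value plus attributions reconstructs the log-odds output.
reconstructed = base_value + sum(phi.values())
probability = 1.0 / (1.0 + exp(-reconstructed))
```

Here `reconstructed` matches `log_odds(instance)` up to floating-point error, which is the check an auditor can rerun on any stored explanation. Note that `debt_ratio`, which has no interaction term, receives exactly its own marginal effect, while the interaction between `income` and `tenure` is shared between those two features.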

Snippet 2: Anchor Tabular for Compliance-Readable Output

Source: SeldonIO/alibi, anchor_tabular_iris.ipynb (SeldonIO/alibi, ~2,400 stars, maintained 2025)

The anchor explanation returns a human-readable if-then rule with a precision score (the fraction of inputs satisfying the rule for which the model returns the same prediction) and a coverage score (the share of inputs to which the rule applies at all). A compliance officer reviewing a loan rejection can read “income <= 28,000 AND employment = part-time” directly, without needing to interpret numeric feature weights. This solves the stakeholder translation problem that raw SHAP values create.
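To make the two scores concrete, the sketch below evaluates a fixed rule against a small hand-written sample. It is an illustration of the definitions only: an anchor explainer searches for the rule and estimates precision by sampling perturbed inputs, whereas here the rule is given and the sample is static. The model, the rows, and all function names are hypothetical.

```python
import operator

OPS = {"<=": operator.le, ">": operator.gt, "==": operator.eq}


def model_predict(row):
    """Hypothetical credit model: 1 = approve, 0 = reject."""
    approve = row["income"] > 30000 or row["employment"] == "full-time"
    return 1 if approve else 0


def anchor_holds(row, anchor):
    """True when the row satisfies every condition in the rule."""
    return all(OPS[op](row[feature], value) for feature, op, value in anchor)


def render(anchor):
    """Format the rule as the if-then text shown to a reviewer."""
    return " AND ".join(f"{feature} {op} {value}" for feature, op, value in anchor)


def precision_and_coverage(rows, anchor, predict, target):
    covered = [row for row in rows if anchor_holds(row, anchor)]
    coverage = len(covered) / len(rows)
    if not covered:
        return 0.0, coverage
    matches = sum(1 for row in covered if predict(row) == target)
    return matches / len(covered), coverage


SAMPLE = [
    {"income": 25000, "employment": "part-time"},
    {"income": 27000, "employment": "part-time"},
    {"income": 26000, "employment": "full-time"},
    {"income": 45000, "employment": "part-time"},
    {"income": 52000, "employment": "full-time"},
]

full_rule = [("income", "<=", 28000), ("employment", "==", "part-time")]
loose_rule = [("income", "<=", 28000)]

# target=0 because the decision being explained is a rejection
full_scores = precision_and_coverage(SAMPLE, full_rule, model_predict, target=0)
loose_scores = precision_and_coverage(SAMPLE, loose_rule, model_predict, target=0)
```

On this sample the two-condition rule covers fewer rows but every covered row is rejected, while dropping the employment condition widens coverage and lets an approved full-time applicant through, lowering precision. That trade-off is what the two scores let a reviewer see.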


Translating Explanations for Compliance, Legal, and End Users

Numeric SHAP values must be converted into plain-language adverse-action notices before reaching compliance officers, auditors, or customers. This translation step is as important as the technical explainer itself. A production XAI system needs three explanation layers targeting three different audiences.

Layer 1: Developer View (Raw Explanation Payload)

SHAP values, feature importance rankings, and Shapley interaction values stored in the explanation store. Used by data scientists for model debugging, drift detection, and bias monitoring. The payload includes model version, feature names, SHAP values, and base value.

Layer 2: Risk Officer and Compliance View

Top-N feature drivers ranked by absolute SHAP magnitude, displayed in the compliance dashboard. Each driver is labelled with the business-readable feature name and a directional indicator (increased or decreased approval probability). Explanation drift alerts fire when the top-driver distribution shifts more than a set threshold over a rolling window.
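A minimal sketch of this layer might look like the following. It assumes SHAP values are expressed on an approval log-odds scale, so a positive value raises approval probability. The label map, the 0.2 threshold, and the use of total variation distance as the drift measure are illustrative choices, not something the text prescribes.

```python
from collections import Counter

# Hypothetical mapping from model feature names to business-readable labels
FEATURE_LABELS = {
    "income": "Declared annual income",
    "debt_ratio": "Debt-to-income ratio",
    "tenure": "Length of employment",
}


def top_drivers(shap_values, n=3):
    """Rank features by absolute SHAP magnitude and attach a direction."""
    ranked = sorted(shap_values.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return [
        {
            "feature": name,
            "label": FEATURE_LABELS.get(name, name),
            "direction": "increased" if value > 0 else "decreased",
            "magnitude": abs(value),
        }
        for name, value in ranked[:n]
    ]


def top_driver_distribution(explanations):
    """Share of decisions in a window for which each feature ranked first."""
    counts = Counter(top_drivers(e, n=1)[0]["feature"] for e in explanations)
    total = sum(counts.values())
    return {feature: count / total for feature, count in counts.items()}


def drift_score(reference, current):
    """Total variation distance between two top-driver distributions."""
    features = set(reference) | set(current)
    return 0.5 * sum(abs(reference.get(f, 0.0) - current.get(f, 0.0))
                     for f in features)


def drift_alert(reference_window, current_window, threshold=0.2):
    """Fire when the top-driver mix has shifted by more than the threshold."""
    score = drift_score(top_driver_distribution(reference_window),
                        top_driver_distribution(current_window))
    return score > threshold
```

For example, if income was the top driver for every decision in the reference window but for only half of the current window, the score is 0.5 and the alert fires. Each window is simply a list of per-decision SHAP dicts read back from the explanation store.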

Layer 3: Customer-Facing Adverse Notice

Template-based natural language generation maps the top 3 SHAP drivers to regulatory-compliant adverse-action language. The CFA Institute (2025) notes that counterfactual explanations are particularly useful for customers: “If your monthly income were $2,000 higher, your application would have been approved.” These counterfactuals are generated using DiCE or alibi’s counterfactual explainers and delivered via the end-user notification layer.
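The template step can be sketched as a lookup from feature name to a pre-approved sentence. Everything below is hypothetical: the wording is placeholder text rather than language vetted against any regulation, and restricting the notice to features that pushed the score toward rejection (negative SHAP values on an approval scale) is a design assumption layered on top of the "top 3 drivers" described above. Counterfactual generation with DiCE or alibi is not shown.

```python
# Hypothetical reason templates; real wording would come from legal review.
REASON_TEMPLATES = {
    "income": "Your declared income is below the level we require for this product.",
    "debt_ratio": "Your existing debt is high relative to your income.",
    "recent_defaults": "Your credit history shows recent missed payments.",
    "tenure": "Your time in current employment is shorter than we require.",
}
FALLBACK_REASON = "Other information in your application affected the decision."
NOTICE_HEADER = "We were unable to approve your application. The main reasons were:"


def adverse_action_notice(shap_values, max_reasons=3):
    """Build a plain-language notice from the strongest negative drivers."""
    # Keep only features that lowered the approval score
    negative = [(name, value) for name, value in shap_values.items() if value < 0]
    negative.sort(key=lambda kv: kv[1])  # most negative first
    reasons = [REASON_TEMPLATES.get(name, FALLBACK_REASON)
               for name, _ in negative[:max_reasons]]
    lines = [NOTICE_HEADER]
    lines += [f"{i}. {reason}" for i, reason in enumerate(reasons, start=1)]
    return "\n".join(lines)


example_shap = {
    "income": -0.9,
    "debt_ratio": -0.4,
    "tenure": 0.3,
    "recent_defaults": -0.6,
    "age": -0.05,
}
notice = adverse_action_notice(example_shap)
```

Features that helped the applicant, such as `tenure` here, are left out of the notice, and any feature without a template falls back to a generic sentence rather than exposing an internal variable name to the customer.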

Frequently Asked Questions

How do I meet MAS AIRG explainability requirements for my AI model?

MAS AIRG requires transparency and explainability as proportionate lifecycle controls. In practice this means: document the explainability method chosen and why it is appropriate for the model type, generate and store a per-decision explanation for every high-impact AI output, maintain an immutable audit log linking explanations to model versions, and demonstrate to MAS that the explanation can be retrieved and presented on request. MAS’s 12-month transition guidance means implementation planning should begin immediately.

What is the performance cost of running SHAP in a real-time API?

TreeSHAP on XGBoost or LightGBM models with under 100 features typically runs in 2-10ms per instance, making near-synchronous delivery feasible. KernelSHAP and standard LIME on deep neural networks can take 100-500ms per instance and should never run on the synchronous inference path. The correct production pattern is the async sidecar: return the prediction immediately, compute the explanation asynchronously, and store it for retrieval. Explanation caching for repeated feature vectors can reduce compute by 30-50 percent on high-volume endpoints.

When should I use SHAP instead of LIME for a production model?

Use SHAP when your model is a tree ensemble (XGBoost, LightGBM, Random Forest) and you need both local and global explanations. TreeSHAP provides exact, consistent explanations with polynomial-time complexity. Use LIME when your model is a neural network or any other non-tree architecture and local approximation is sufficient. Salih et al. (2024) show that SHAP is more stable than LIME in the presence of correlated features, a common condition in financial tabular data.

How do I make AI explanations understandable to compliance officers, not just data scientists?

Build a three-layer explanation architecture. The developer layer stores raw SHAP values. The compliance layer converts those values to ranked, business-readable feature drivers with directional indicators displayed in a dashboard. The customer layer uses template-based natural language generation to produce counterfactual adverse-action notices. Anchor explanations from the alibi library are also directly readable by non-technical audiences because they produce if-then rules rather than numeric weights.

Can I use XAI for generative AI or large language models in banking?

Gartner (2026) predicts that XAI criticality will drive LLM observability investments to 50 percent of GenAI deployments by 2028, up from 15 percent today. For LLMs in banking, XAI takes the form of attention visualisation, token-level SHAP attribution, and chain-of-thought logging. For regulated decisions, MAS and the FSI explicitly caution that black-box LLMs in high-stakes settings create a regulatory paradox. Interpretable-by-design models remain preferable for credit and risk applications.

Three Things to Build First

Three insights should anchor your implementation roadmap. First, separate the explanation path from the inference path from day one. Synchronous explanation at every inference call is a latency antipattern that kills your API SLA. Second, choose your explainer based on model architecture: TreeSHAP for tree ensembles, LIME for neural networks, Anchors for compliance-readable outputs. Third, build the three-layer translation stack so that explanations are usable by data scientists, risk officers, and customers simultaneously.

The regulatory timeline is set. MAS AIRG is in a 12-month implementation window. The RBI FREEAI framework is moving toward enforcement. APRA is watching how FAR-regulated entities handle AI decision accountability. The market trajectory confirms the direction: the explainable AI market is projected to grow from USD 6.2 billion in 2023 to USD 16.2 billion by 2028.

Build the audit log first. It is the foundation everything else depends on.

If your model cannot explain its most recent decision in plain language to the customer it affected, are you ready for the regulatory audit that is already scheduled?
