Computer vision quality control in manufacturing is the use of AI-powered cameras and deep learning models to automatically inspect products on a production line, detect surface and structural defects in real time, and trigger corrective actions without human involvement. Systems analyse thousands of images per hour, classify defect types with greater than 95% accuracy, and integrate with existing MES and SCADA platforms to close the loop between detection and process correction.

Why Manual Inspection Is Failing Your Production Line

Computer vision quality control is no longer a pilot program; it is a production-line requirement. Companies still relying on manual inspection lose nearly 20% of annual sales to the cost of poor quality, according to Jidoka Technologies and iFactory platform benchmarks (2026). AI vision systems now achieve 95 to 99% detection accuracy at 10,000 or more parts per hour, holding the same standard around the clock without fatigue or shift-to-shift variability.

The economics are shifting fast. The global quality control machine vision market stood at $2.3 billion in 2023 and is projected to reach $7.2 billion by 2028, according to ABI Research (2024). The trigger is straightforward: AI is doing what human inspectors biologically cannot. The human visual system was designed to scan landscapes, not to detect 50-micron scratches on metal surfaces at 120 parts per minute.

The gap between what inspectors are expected to catch and what they actually catch under real production conditions is enormous. That gap costs manufacturers millions, generates warranty claims, and exposes quality directors to regulatory risk. The shift to automated visual inspection closes it permanently.

“Human inspectors were not designed to catch 50-micron scratches at 120 parts per minute. AI vision systems were.”

The APAC Opportunity: Why This Region Moves Fastest

Asia Pacific is not just adopting AI-powered inspection; it is leading global adoption. Grand View Research (2025) projects that APAC will record the highest compound annual growth rate in AI manufacturing through 2030, driven by electronics, semiconductor, and automotive production across China, Japan, South Korea, and India.

The regional forces behind this acceleration are structural. Electronics and semiconductor manufacturing in Taiwan and South Korea demands zero-defect tolerances that human inspection cannot sustain at volume. China’s “Made in China 2025” initiative has pushed AI adoption across tier-one suppliers, while India’s rapid industrialisation in automotive and pharmaceutical manufacturing is generating demand for scalable quality automation. Fortune Business Insights (2025) reports that APAC held a 42.8% share of the global AI-in-manufacturing market in 2025.

APAC plants also face unique challenges that make AI vision more urgent, not just more attractive. Multi-SKU lines, multilingual workforce environments, and variable ambient lighting conditions require adaptive inspection systems. Rule-based machine vision breaks down here. Deep learning models, trained on diverse production images, adapt to these conditions in ways that static threshold algorithms never can.

Five High-Impact Use Cases Across APAC Industry Verticals

The highest-ROI computer vision QC deployments in APAC share one characteristic: they target processes where the defect cost significantly exceeds the detection cost. Here are the five verticals where teams are generating the fastest payback.

Semiconductor wafer and chip inspection is the highest-stakes application. A semiconductor manufacturer in Taiwan increased throughput by 50% after deploying AI vision for wafer surface analysis, according to iFactory platform data (2026). At wafer prices of $500 to $5,000 each, catching a micro-crack before packaging is not a quality improvement; it is a direct financial recovery.

Automotive weld seam and paint analysis is the second major use case. High-resolution cameras paired with convolutional neural networks now outperform veteran inspectors in weld-seam analysis, catching micro-cracks as small as 50 micrometres. Manufacturing Technology Insights (2024) data shows AI vision cutting manual inspection time by 60% and rework by 23% in automotive settings.

PCB automated optical inspection (AOI) is the third. Research published on arXiv (Chou et al., 2025) demonstrates a YOLOv9 model integrated with SCADA achieving 91.3% detection accuracy at 146 milliseconds per frame on metal sheet and PCB defect data. Modern AI vision systems in electronics manufacturing detect assembly and soldering defects in under 200 milliseconds, enabling real-time corrections that prevent error propagation downstream.

Consumer goods packaging verification covers fill level, seal integrity, label placement, and date code legibility at conveyor speeds that human inspectors cannot match. This use case is common across FMCG plants in India, Indonesia, and Vietnam, where production runs are high-volume and rejection rates under legacy manual inspection are persistently above 2%.

Pharmaceutical blister pack and pill inspection is driven by regulatory compliance. Deloitte’s 2025 smart manufacturing survey notes that regulatory risk now sits alongside operational efficiency as a primary driver of AI adoption in quality-sensitive manufacturing. A DNN soft sensor evaluated in the printing and pharmaceutical packaging sector achieved 98.4% automated classification accuracy in peer-reviewed research (Sensors, 2019).

“The factories posting the biggest quality gains are not the ones with the most cameras; they are the ones that closed the loop between detection and process correction.”

System Architecture for Computer Vision Quality Control

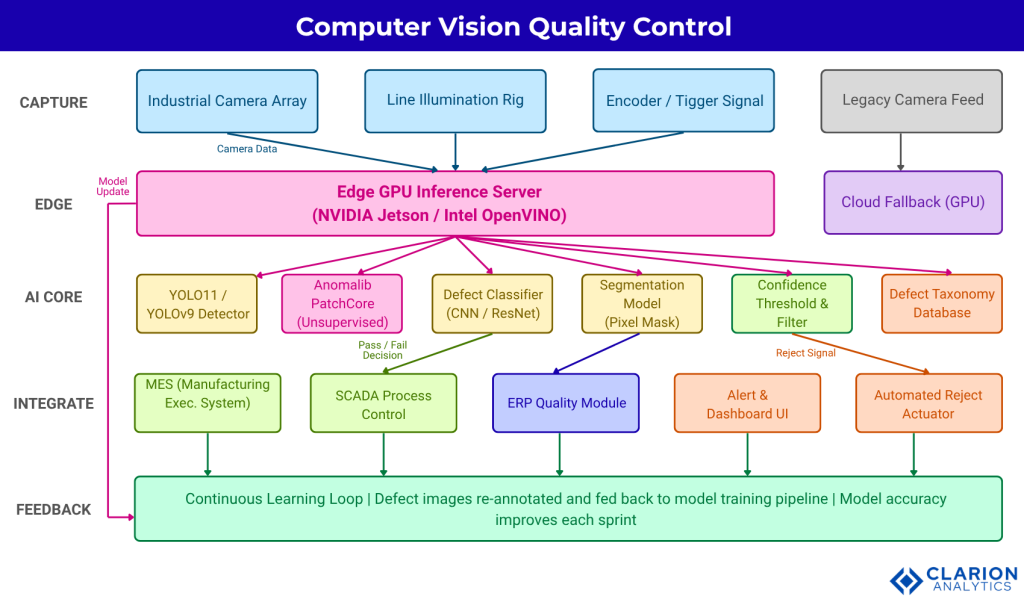

A production-ready computer vision QC architecture combines industrial cameras, edge GPU inference, a trained defect detection model, and a bidirectional API to MES and SCADA, all operating at sub-100ms latency to support real-time corrective action.

The architecture has five layers:

Layer 1 – Capture. Industrial line-scan or area-scan cameras, triggered by encoder signals at defined inspection intervals, capture high-resolution frames. Controlled illumination rigs, typically structured light or coaxial LED, eliminate shadow artefacts that confuse models.

Layer 2 – Edge Inference. Frames route to an on-premise edge GPU server or embedded module (for example, an NVIDIA Jetson, or Intel hardware running OpenVINO-optimised models) that hosts the defect detection model. Processing occurs within the plant network. No production image leaves the facility.

Layer 3 – AI Inference. The deployed model, whether a YOLO-family object detector or an Anomalib unsupervised anomaly model, scores each frame. Defect bounding boxes, confidence scores, and defect class labels are produced in real time. A confidence threshold filter suppresses low-certainty predictions before any downstream action triggers.

Layer 4 – Integration and Action. Pass/fail decisions push to the Manufacturing Execution System (MES) via REST API or OPC-UA. SCADA systems receive process adjustment signals. Alert dashboards notify quality engineers. Automated reject actuators divert flagged parts without halting the line.

Layer 5 – Continuous Learning Feedback. All flagged images, together with their verified ground-truth labels from downstream quality checks, re-enter the model training pipeline. Each retraining cycle tightens the model. Defect-detection accuracy exceeds 98% in mature deployments, per Gartner (2025).

Fig. 1 Caption: Industrial cameras feed frames to edge GPU servers running YOLO or Anomalib models. Defect decisions route to MES and SCADA for process control, alert dashboards, and automated reject actuators. All flagged images re-enter the training pipeline, continuously improving model accuracy in a virtuous data loop that drives precision higher every quarter.
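The hand-off between Layer 3 and Layer 4 can be sketched in a few lines of plain Python. This is a minimal illustration rather than a reference implementation: the `Detection` class, the `filter_detections` and `build_mes_payload` helpers, the payload field names, and the 0.5 threshold are all assumptions made for the example. A real MES or OPC-UA interface will define its own message schema.

```python
from dataclasses import dataclass
import json


@dataclass
class Detection:
    label: str         # defect class, e.g. "scratch"
    confidence: float  # model confidence in [0, 1]
    box: tuple         # (x1, y1, x2, y2) in pixels


def filter_detections(detections, threshold=0.5):
    """Layer 3: drop low-certainty predictions before anything acts on them."""
    return [d for d in detections if d.confidence >= threshold]


def build_mes_payload(part_id, detections, threshold=0.5):
    """Layer 4: turn the surviving detections into a pass/fail message.

    The field names here are hypothetical, not a real MES schema.
    """
    kept = filter_detections(detections, threshold)
    return {
        "part_id": part_id,
        "result": "FAIL" if kept else "PASS",
        "defects": [
            {"label": d.label, "confidence": round(d.confidence, 3), "box": list(d.box)}
            for d in kept
        ],
    }


# Example frame: one confident detection, one that the filter suppresses.
frame_detections = [
    Detection("scratch", 0.91, (120, 40, 180, 66)),
    Detection("dent", 0.32, (300, 210, 340, 250)),
]
payload = build_mes_payload("PART-0001", frame_detections, threshold=0.5)
print(json.dumps(payload, indent=2))
```

Keeping the threshold as an explicit parameter, rather than burying it in the model call, makes it easy for quality engineers to tune it per defect class later.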

Choosing the Right AI Approach: A Comparison of Key Methods

The correct model architecture depends on data availability, latency requirements, and how frequently your product mix changes. There is no single right answer, but there is a clear decision framework.

| Approach | Key Strength | Best Used When |
|---|---|---|
| Supervised Object Detection (YOLO11, YOLOv9) | Highest accuracy with labelled defect data; real-time bounding-box output at sub-200ms | You have annotated defect image datasets and need per-frame localization at speed |
| Unsupervised Anomaly Detection (Anomalib PatchCore) | Requires zero defect labels; trains on defect-free images only; adapts to novel anomalies | New product lines, rare-defect categories, or high-mix low-volume production |
| Classical Machine Vision (rule-based thresholding) | Zero model training required; deterministic; fully explainable | Single-defect checks under stable, controlled lighting with no product variation |

For new product lines with no historical defect image library, unsupervised anomaly detection trains only on defect-free images and flags deviations, removing the annotation bottleneck entirely. Research from Huazhong University and Tsinghua (Cheng et al., arXiv 2025) confirms that the shift from closed-set to open-set defect detection frameworks is reducing annotation requirements while enabling recognition of novel anomaly types that labelled models would miss.
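The comparison above can be read as a small decision function. The sketch below is one possible encoding, not a rule stated by any of the cited sources: the function name, the two boolean inputs, and the order in which the checks run are assumptions made for illustration.

```python
def choose_approach(has_labelled_defects: bool, stable_single_check: bool) -> str:
    """Map plant conditions to one of the three approaches in the table.

    stable_single_check: one defect type, controlled lighting, no product variation.
    has_labelled_defects: an annotated defect image dataset already exists.
    """
    if stable_single_check:
        # Deterministic rules are enough and need no training data.
        return "classical machine vision"
    if has_labelled_defects:
        # Labels exist, so per-frame localisation with a detector is available.
        return "supervised object detection"
    # No labels yet: train on defect-free images only.
    return "unsupervised anomaly detection"


# A new SKU with no defect library and a changing product mix.
print(choose_approach(has_labelled_defects=False, stable_single_check=False))
```

In practice the approaches are often combined, for example an anomaly model during a product launch that hands over to a supervised detector once enough defect images have been labelled.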

“The annotation bottleneck is the silent killer of computer vision projects in manufacturing. Unsupervised anomaly detection removes it completely.”

Implementation Roadmap: From Pilot to Production Scale

Implementation follows a predictable four-stage arc: define the defect taxonomy, collect and annotate baseline images, deploy a pilot on one line, then integrate with MES before scaling plant-wide.

In practice, teams typically find that the first 90 days are dominated not by model training but by camera positioning, lighting standardisation, and cleaning historical image data. The model is rarely the bottleneck. McKinsey’s lighthouse factory analysis (2023) reports that successful AI manufacturing deployments achieve two- to three-fold productivity gains, but only when implementation teams invest in the surrounding data infrastructure, not just the algorithm.

Snippet 1 – Supervised Defect Detection with YOLO11

Source: ultralytics/ultralytics – 125,000+ GitHub stars, actively maintained.

This snippet shows how a trained YOLO model loads in two lines and runs inference on a single camera frame. The results object returns bounding boxes, confidence scores, and class labels within milliseconds, ready to pass directly to a PLC or MES integration layer. A plant manager can see immediately that the AI runtime is not the complex part.
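A minimal sketch of that flow with the Ultralytics Python package follows. It assumes `ultralytics` is installed and that a defect model has already been trained. The weights file `defect_yolo11.pt`, the image name `frame_0001.jpg`, and the helpers `load_model`, `inspect_frame`, and `to_records` are hypothetical names used only for this example.

```python
def to_records(xyxy, confs, class_ids, names):
    """Convert raw detector outputs into plain dicts for a PLC/MES layer."""
    return [
        {"label": names[int(c)], "confidence": float(p), "box": [float(v) for v in b]}
        for b, p, c in zip(xyxy, confs, class_ids)
    ]


def load_model(weights="defect_yolo11.pt"):
    """Load trained weights once at start-up (hypothetical file name)."""
    from ultralytics import YOLO  # imported here so the helpers work without it
    return YOLO(weights)


def inspect_frame(model, image_path, conf=0.5):
    """Run inference on one camera frame and return its detections."""
    result = model(image_path, conf=conf)[0]  # one Results object per image
    boxes = result.boxes
    return to_records(
        boxes.xyxy.tolist(),   # corner coordinates per box
        boxes.conf.tolist(),   # confidence score per box
        boxes.cls.tolist(),    # class index per box
        result.names,          # class index -> label mapping
    )


# Usage on the line (needs ultralytics and a real weights file):
# model = load_model("defect_yolo11.pt")
# detections = inspect_frame(model, "frame_0001.jpg", conf=0.5)
```

Loading the model once and reusing it for every frame keeps per-frame cost down to inference alone. Actual latency depends on the hardware, image size, and model variant.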

Snippet 2 – Unsupervised Anomaly Detection with Anomalib PatchCore

Source: openvinotoolkit/anomalib – 4,100+ GitHub stars, v2.2.0 released 2025.

This snippet demonstrates the cold-start solution for new product lines. The model trains only on defect-free images, learns what “good” looks like, and flags any deviation at inference. No labelled defect database is required. For APAC plants introducing new SKUs weekly, this eliminates the weeks of annotation work that block supervised model deployment.
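A hedged sketch of this workflow with Anomalib's Python API is shown below. It assumes a recent Anomalib release is installed and that defect-free images sit in a `good/` subfolder. The dataset name, folder paths, and helper names are hypothetical, and argument names and prediction fields have changed between Anomalib versions, so check them against the installed release.

```python
from pathlib import Path


def train_on_good_images(root, normal_dir="good"):
    """Fit PatchCore using defect-free images only."""
    from anomalib.data import Folder
    from anomalib.engine import Engine
    from anomalib.models import Patchcore

    # "new_sku" is an arbitrary dataset name chosen for this example.
    datamodule = Folder(name="new_sku", root=root, normal_dir=normal_dir)
    model = Patchcore()
    engine = Engine()
    engine.fit(datamodule=datamodule, model=model)
    return engine, model


def score_images(engine, model, image_dir):
    """Run the fitted model over a folder of new images."""
    from anomalib.data import PredictDataset

    dataset = PredictDataset(path=Path(image_dir))
    # Each prediction carries an image-level score and a pixel-level anomaly map.
    return engine.predict(model=model, dataset=dataset)


def flag_for_review(scored, threshold):
    """Return paths whose anomaly score meets the threshold (library-free helper)."""
    return [path for path, score in scored if score >= threshold]


# Usage (needs anomalib and real image folders):
# engine, model = train_on_good_images("datasets/new_sku")
# predictions = score_images(engine, model, "datasets/new_sku/incoming")
```

If a handful of known-bad images is available, passing them to the datamodule as an abnormal folder gives the validation step something to measure against. The sketch leaves that out to keep the cold-start case visible.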

“In manufacturing AI, the model is rarely the bottleneck – the camera calibration, lighting rig, and data pipeline almost always are.”

Three practical sequencing notes for plant managers. First, start with one defect type on one line. Scope creep in pilot programs is the most common cause of delayed production deployment. Second, allocate 25% of your first-year budget to data engineering: API connectors to MES, image storage, and labelling tooling. Deloitte (2024) finds that successful smart manufacturing programs consistently over-invest here relative to initial plans. Third, involve quality engineers in model validation from day one. Their domain knowledge on defect severity classification translates directly into confidence threshold calibration, which determines false positive rates and operator trust.
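Confidence threshold calibration, the third note above, can be made concrete with a small sweep over a validated sample. The sketch below is illustrative only: `sweep_thresholds` is a hypothetical helper, and the scores and labels are made-up values standing in for model outputs that quality engineers have verified.

```python
def sweep_thresholds(scores, is_defect, thresholds):
    """For each threshold, report recall and false positive rate.

    scores: model confidence per part; is_defect: verified ground truth per part.
    """
    n_defect = sum(1 for d in is_defect if d)
    n_good = len(is_defect) - n_defect
    rows = []
    for t in thresholds:
        # True positives: real defects the model flags at this threshold.
        tp = sum(1 for s, d in zip(scores, is_defect) if d and s >= t)
        # False positives: good parts the model would wrongly reject.
        fp = sum(1 for s, d in zip(scores, is_defect) if not d and s >= t)
        rows.append({
            "threshold": t,
            "recall": tp / n_defect if n_defect else 0.0,
            "false_positive_rate": fp / n_good if n_good else 0.0,
        })
    return rows


# Made-up validation sample: three verified defects, three verified good parts.
scores = [0.95, 0.80, 0.40, 0.65, 0.30, 0.10]
is_defect = [True, True, True, False, False, False]
rows = sweep_thresholds(scores, is_defect, thresholds=[0.3, 0.5, 0.7])
for row in rows:
    print(row)
```

Raising the threshold trades missed defects for fewer false rejects. Where to sit on that curve is a severity judgement, which is why quality engineers should own it.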

Frequently Asked Questions

What is computer vision quality control in manufacturing?

Computer vision quality control uses AI-trained cameras and deep learning models to automatically detect product defects on a production line in real time. Cameras capture images at high speed; models classify and localise defects; and the system triggers reject actuators or process adjustments without human involvement. Detection accuracy typically ranges from 95% to 99% in production deployments.

How accurate is AI defect detection compared to human inspectors?

AI vision systems consistently outperform human inspectors under real production conditions. Human inspectors experience fatigue, attention drift, and shift-to-shift variability. AI systems maintain identical standards 24 hours a day. Mature deployments report detection accuracy above 98%, compared to typical human inspection accuracy of 75 to 85% on repetitive high-speed lines.

How long does it take to implement a visual inspection AI system?

A focused pilot covering one defect type on one line typically takes 60 to 90 days from camera installation to a validated model. Full plant-wide deployment, including MES and SCADA integration, ranges from six to twelve months, depending on data readiness and legacy infrastructure complexity. Payback periods on documented deployments range from 12 to 18 months.

Can AI quality control work without labelled defect images?

Yes. Unsupervised anomaly detection models, such as Anomalib PatchCore, train exclusively on defect-free images. The model learns a representation of normal product appearance and flags any deviation at inference time. This approach is especially valuable for new product lines with no historical defect dataset, or for rare-defect categories where annotated examples are scarce.

What is the ROI of computer vision quality control for APAC manufacturers?

ROI depends on defect cost, volume, and rework rates, but documented benchmarks are strong. Vision AI cuts manual inspection time by 60% and rework by 23%, per Manufacturing Technology Insights (2024). Deloitte research finds companies investing in AI see average first-year ROI of 17%, with top performers reaching 30% or more. Semiconductor manufacturers in Taiwan have reported 50% throughput gains from AI vision deployment.

Three Truths Every Quality Leader in APAC Should Act On

The evidence from across the region points to three conclusions that should shape your 2025 and 2026 quality investment decisions.

First, the accuracy gap between AI vision and human inspection is now so large that manual-only inspection is a competitive liability. Systems achieving 95 to 99% detection accuracy at 10,000 parts per hour are not experimental; they are deployed in high-volume APAC facilities today.

Second, the annotation barrier that blocked many early AI QC projects has a practical solution. Unsupervised anomaly detection removes the requirement for labelled defect data entirely, making AI vision deployable on day one of a new product launch, not six months after enough defect images have accumulated.

Third, APAC is the fastest-growing region for AI manufacturing adoption, which means competitors in your sector are already deploying these systems. Grand View Research (2025) projects the computer vision segment will grow the fastest of all AI-in-manufacturing technologies through 2030. The window for first-mover advantage in AI quality control is narrowing.

The question worth sitting with: if your best human inspector retired tomorrow, would your quality line hold?

“The quality leaders who will define APAC manufacturing in 2030 are the ones deploying computer vision on the line today, not the ones still evaluating it.”