On-premise LLM deployment is the practice of hosting and running large language models entirely within an organization’s own infrastructure, whether on bare-metal servers, a private data center, or an air-gapped environment with no external network access. It gives enterprises complete control over data residency, model weights, inference traffic, and audit trails, independent of any third-party cloud provider.

Why Enterprises Are Moving LLMs Off the Cloud

Every prompt sent to a cloud AI API crosses your network boundary. For most organizations, that is an acceptable trade-off. For a defense contractor analyzing ITAR-controlled engineering drawings, a hospital querying patient records, or a European bank processing transaction data under GDPR, it is not.

Gartner’s Predicts 2026: AI Sovereignty report (October 2025) projects that by 2030, more than 75% of European and Middle Eastern enterprises will geopatriate their virtual workloads to reduce geopolitical risk. Today that figure sits below 5%. The regulatory gap between those two numbers is where on-premise LLM deployment lives.

Deloitte’s State of AI in the Enterprise 2026 (September 2025), surveying 3,235 senior leaders across 24 countries, found that sovereign AI has become a board-level priority: organizations are embedding privacy, sovereignty, and security-by-design directly into their AI data strategy. Deloitte calls this a “unified, trusted data strategy” and frames it as indispensable for enterprises operating at scale.

The same pressure shows up in budgets. Deloitte’s AI Infrastructure Survey (December 2025) found that 86% of enterprise respondents expect AI infrastructure budgets to more than triple over the next three years. Over 70% plan to scale on-premise or edge AI deployments by 2028. That is not experimentation. That is a capital commitment.

“An on-premise LLM is not an infrastructure preference. For regulated industries, it is a legal requirement.”

Use Cases That Demand Private Deployment

The strongest candidates for on-prem LLM deployment are organizations where cloud data egress is either legally prohibited or operationally unacceptable. Three categories consistently emerge.

Defense and Government

Los Alamos National Laboratory (January 2025) chose to self-host LLMs rather than depend on Azure’s OpenAI API. Their reasoning was direct: “hosting our own services gives us the right security and compliance posture to be useful across the broad range of our work.” The bar for defense workloads is strict: ITAR controls the export of defense-related technical data, and a single firewall rule allowing outbound HTTPS to a licensing server disqualifies a deployment from true air-gap status in most ITAR compliance frameworks.

Healthcare and Life Sciences

HIPAA requires that Protected Health Information (PHI) be processed only on systems with appropriate controls. A 2025 analysis of healthcare AI platforms argues that the EU AI Act (which entered into force in August 2024, with obligations phasing in from 2025 onward) and the FDA’s evolving AI guidelines will require air-gapped deployments for the highest-risk clinical AI systems. With GDPR fines reaching up to 4% of global annual turnover, the cost of a misconfigured API call can be catastrophic.

Financial Services

European banks and asset managers operating under GDPR Article 46 data transfer restrictions cannot legally route customer financial data through U.S.-hosted cloud APIs without specific safeguards. In practice, private LLM deployment resolves that legal uncertainty by eliminating the transfer entirely. Enterprise Strategy Group (2025) research reports that 71% of AI infrastructure now operates outside public cloud environments, driven primarily by financial sector demand.

The Reference Architecture for On-Prem LLM Stacks

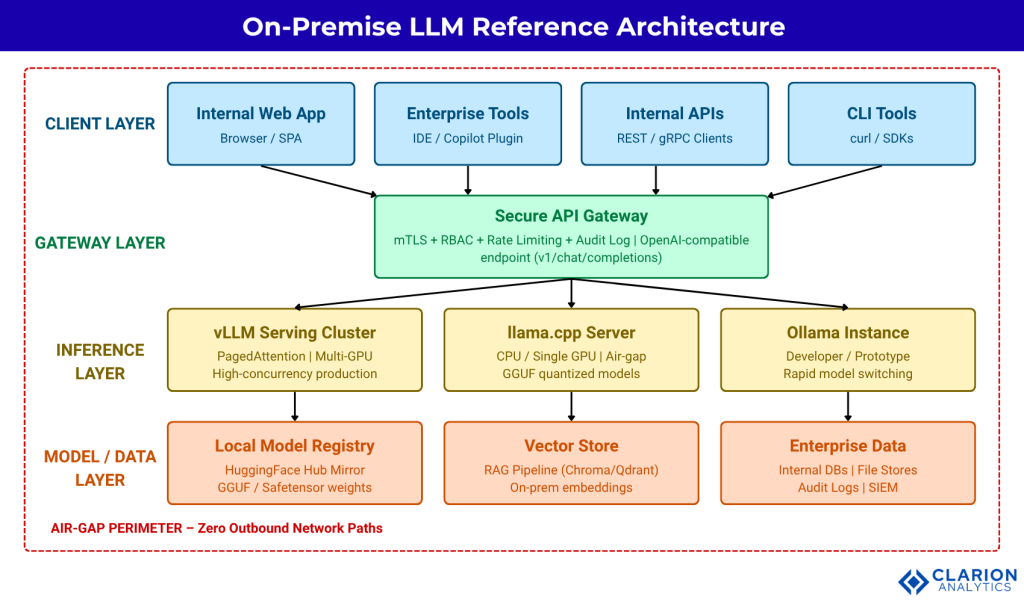

A production on-premise LLM stack has five layers: the hardware tier (GPU cluster or CPU nodes), the inference engine (vLLM, llama.cpp, or Ollama), a local model registry (an offline Hugging Face mirror or GGUF model store), an orchestration layer (Kubernetes or Docker Compose with GPU device plugins), and a secure API gateway enforcing mTLS, RBAC, rate limiting, and full audit logging.

Figure 1: On-Premise LLM Reference Architecture. Client applications (web apps, IDE plugins, CLI tools) send requests through a secure API gateway enforcing mTLS, RBAC, and audit logging. The gateway routes to one of three inference engines: vLLM for high-concurrency GPU production, llama.cpp for air-gapped CPU environments, and Ollama for prototyping. All engines pull model weights from a local registry and access enterprise data stores. The red dashed perimeter represents the air-gap boundary: zero outbound network paths are permitted.

“Most teams over-engineer the model layer and under-engineer the API gateway. The security perimeter is the gateway, not the GPU.”

Code Snippet 1: Zero-Egress Inference with Ollama

Source: ollama/ollama README.md (GitHub) – The following curl command sends a chat request to a locally running Ollama server. No API key, no outbound connection, no data leaving the perimeter.

```bash
# Pull a model once while the host still has registry access
# (in an air-gapped setup, import a local model file with `ollama create` instead)
ollama pull llama3.1:8b

# Send a chat request to the local inference endpoint
curl http://localhost:11434/api/chat \
  -d '{
    "model": "llama3.1:8b",
    "messages": [{ "role": "user", "content": "Summarize this contract clause." }],
    "stream": false
  }'
```

Every component in this request is local: the model weights sit on disk, the server runs on localhost, and the response never touches an external network. This is the foundation of a compliant private LLM deployment.
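For application code, the same request can be issued from Python with only the standard library. The sketch below assumes the Ollama server from the curl example is listening on its default port; the helper names `build_chat_request` and `chat` are illustrative, not part of Ollama.

```python
import json
import urllib.request

# Same local endpoint as the curl example; nothing here leaves the host.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(prompt: str, model: str = "llama3.1:8b") -> urllib.request.Request:
    """Build a non-streaming chat request for the local Ollama server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str = "llama3.1:8b", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the assistant's reply text."""
    request = build_chat_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # With "stream": false the server answers with a single JSON object
    # whose "message" field carries the assistant reply.
    return body["message"]["content"]
```

Calling `chat("Summarize this contract clause.")` returns the reply as a string. Keeping the request builder separate from the network call makes the payload easy to unit test without a running server.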

Choosing Your Inference Engine: Tools and Trade-offs

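Three open-source inference engines dominate on-prem LLM deployments: vLLM, llama.cpp, and Ollama. Two more specialized options, TensorRT-LLM and SGLang, are worth knowing for specific hardware or workloads. Each solves a different constraint, and choosing the wrong one for your workload costs weeks of rework.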

| Tool | Key Strength | Best Used When |
|---|---|---|
| vLLM | PagedAttention delivers highest throughput for concurrent GPU workloads. OpenAI-compatible API. 79.6k GitHub stars. | You have multi-GPU hardware and need to serve hundreds of concurrent users in production. |
| llama.cpp | Pure C/C++, zero dependencies. Runs CPU-only. 100k+ GitHub stars (March 2026). GGUF quantization cuts VRAM 4x. | Air-gapped environments, CPU-only nodes, or edge servers where GPU availability is constrained. |
| Ollama | Docker-like model management, 171k GitHub stars. Fastest time-to-inference. Cross-platform. | Developer prototyping, single-user inference, or rapid model switching before committing to production stack. |
| TensorRT-LLM (NVIDIA) | Maximum raw throughput on NVIDIA H100/H200. Hardware-optimized CUDA kernels. | You are fully committed to NVIDIA infrastructure and need extreme throughput at scale. |
| SGLang | Structured output and multi-call programs; strong AMD GPU support. | Applications requiring constrained JSON outputs, tool calling, or multi-step agentic workflows. |

Code Snippet 2: Air-Gapped Batch Inference with vLLM

Source: vllm-project/vllm – examples/offline_inference/basic/basic.py (GitHub) – This script instantiates vLLM entirely from local model weights and processes a batch of prompts. No network calls occur after model load.
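A minimal sketch in the spirit of that example might look like the following. The weights path `/models/llama-3.1-8b-instruct` and the helper names `offline_env` and `run_batch` are assumptions for illustration. The environment flags are the offline switches documented by Hugging Face and vLLM, set before vLLM is imported.

```python
import os

# Hypothetical path: weights already copied inside the perimeter.
MODEL_DIR = "/models/llama-3.1-8b-instruct"


def offline_env() -> dict:
    """Flags that keep the Hugging Face libraries and vLLM off the network."""
    return {
        "HF_HUB_OFFLINE": "1",        # huggingface_hub: never contact the Hub
        "TRANSFORMERS_OFFLINE": "1",  # transformers: use local files only
        "VLLM_NO_USAGE_STATS": "1",   # vLLM: disable usage-stats reporting
    }


def run_batch(prompts: list[str], model_dir: str = MODEL_DIR) -> list[str]:
    """Generate one completion per prompt from local weights."""
    os.environ.update(offline_env())
    # Imported here so the offline flags are in place before vLLM initializes.
    from vllm import LLM, SamplingParams

    sampling = SamplingParams(temperature=0.8, top_p=0.95, max_tokens=256)
    llm = LLM(model=model_dir)  # a local directory, not a Hub model ID
    outputs = llm.generate(prompts, sampling)
    # Each result holds one or more candidates; keep the first candidate's text.
    return [output.outputs[0].text for output in outputs]
```

A call such as `run_batch(["Hello, my name is", "The capital of France is"])` returns one completion string per prompt, in order.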

PagedAttention, vLLM’s core memory management innovation, stores the KV cache in fixed-size blocks, which keeps memory fragmentation low and lets a batch like this run efficiently even across very long contexts. In production, replace the list of prompts with a queue-driven pipeline. The API remains identical.

Implementation Guidance: From Pilot to Production

In practice, teams that go straight from zero to a multi-GPU Kubernetes cluster spend three months debugging infrastructure rather than building product. A staged rollout avoids this.

Stage 1: Single-node Ollama. Pull the target model to one server, route a small internal use case through it, and validate that prompt quality meets your requirements. This takes days, not weeks.

Stage 2: Production inference with vLLM. Once model choice is confirmed, migrate to vLLM on a multi-GPU node. Research by Knoop and Holtmann (arXiv, 2026) shows that self-hosted inference costs $0.001-$0.04 per million tokens in electricity versus 40-200x more on cloud APIs. Hardware breaks even in under four months at 30 million tokens per day. Run benchmarks against your actual concurrency target before selecting GPU count.

Stage 3: Orchestration and hardening. Add Kubernetes with GPU device plugins, an OpenAI-compatible API gateway (nginx or Envoy with mTLS), role-based access control, and a prompt/completion audit log. Connect your vector store for RAG pipelines.
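As one illustration of the audit log in Stage 3, the sketch below builds a JSON-lines record per exchange. The field names and the `audit_record` and `append_audit_line` helpers are assumptions, not a standard schema; adapt them to whatever your SIEM expects.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user_id: str, model_version: str, prompt: str, completion: str) -> str:
    """Return one JSON line describing a single prompt/completion exchange."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "model_version": model_version,
        "prompt": prompt,
        "completion": completion,
        # Digests of the stored text, usable for integrity checks downstream.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "completion_sha256": hashlib.sha256(completion.encode("utf-8")).hexdigest(),
    }
    return json.dumps(record, ensure_ascii=False)


def append_audit_line(path: str, line: str) -> None:
    """Append a record to a local log file that a log shipper can forward."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(line + "\n")
```

Writing one self-describing line per request keeps the log append-only and easy to forward without parsing state.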

McKinsey’s April 2026 analysis of agentic AI infrastructure notes that over one-third of high performers commit more than 20% of their digital budgets to AI. Those budgets increasingly fund private infrastructure precisely because it eliminates the unpredictable token costs of cloud APIs.

Compliance, Security Hardening, and the Air-Gap Checklist

Calling a deployment “on-premise” while it phones home for license validation is not air-gapped. It is a compliance fiction. A genuinely compliant private LLM deployment requires several non-negotiable controls.

- Zero outbound network paths. No HTTPS to licensing servers, no DNS resolution to external resolvers, no NTP sync to public servers.

- Offline model weights loaded from encrypted portable media or an internal source inside the perimeter (USB drive, internal package registry, or air-gapped NFS mount).

- Role-based access control on the inference API. Use mTLS client certificates, not API keys stored in environment variables.

- Full audit logging of every prompt and completion, with user identity, timestamp, and model version. Route logs to your internal SIEM.

- Vendor telemetry disabled. Review every inference engine’s documentation and environment variables for telemetry opt-out flags.

- Model update process via physical media only, with cryptographic verification of model file hashes before loading.
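The hash verification step in the checklist can be sketched with the standard library. This assumes the expected SHA-256 digest arrives through a separate trusted channel (for example, a signed manifest); the function names are illustrative.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so multi-gigabyte weights never sit fully in RAM."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model_file(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file's digest matches the expected value."""
    actual = sha256_of_file(path)
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(actual, expected_sha256.strip().lower())
```

A loader would call `verify_model_file` before handing the path to the inference engine and refuse to start on a mismatch.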

Security research by Balashov, Ponomarova, and Zhai (arXiv, 2025) demonstrates that multi-turn prompt chaining can exfiltrate sensitive corporate data from cloud-connected LLMs even with standard safety measures in place. An air-gapped on-premise deployment removes the external network path that this kind of exfiltration relies on. The residual risk is prompt injection within the perimeter, which you address with fine-grained access control and prompt sanitization at the API gateway layer.

Frequently Asked Questions

What hardware do I need to run an LLM on-premise?

A 7B parameter model runs in FP16 on a single NVIDIA RTX 4090 (24GB VRAM) or equivalent; a 13B model needs about 26GB in FP16, so it fits on the same card only with 8-bit or 4-bit quantization. For 70B models, you need either a multi-GPU server (2x A100 80GB) or INT4 quantization via llama.cpp, which cuts a 70B model from 140GB to roughly 35GB. CPU-only inference on a 64-core server works for single-user workloads but is too slow for multi-user production.
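The sizing figures above follow from simple arithmetic: parameters times bytes per parameter. The sketch below reproduces it. The 20% headroom for KV cache and activations is an assumed rule of thumb, and real quantization formats carry some extra metadata, so treat the results as lower bounds.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str = "fp16") -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def fits_on_gpu(params_billions: float, precision: str, vram_gb: float,
                overhead_fraction: float = 0.2) -> bool:
    """Check fit with assumed headroom for KV cache and activations."""
    needed = weight_memory_gb(params_billions, precision) * (1 + overhead_fraction)
    return needed <= vram_gb
```

For example, `weight_memory_gb(70, "fp16")` gives 140.0 and `weight_memory_gb(70, "int4")` gives 35.0, matching the figures quoted above.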

Is on-premise LLM deployment cheaper than using cloud AI APIs?

Yes, once volume is high enough. Research published on arXiv (2026) shows self-hosted inference costs $0.001-$0.04 per million tokens in electricity, while cloud APIs charge $2.50-$15.00 per million tokens. Hardware typically breaks even in under four months at 30 million tokens per day. Below that volume, cloud APIs remain more cost-effective when hardware cost is annualized.
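To test the break-even claim against your own numbers, a back-of-the-envelope calculation is enough. The sketch below uses the per-token prices quoted above. The hardware cost is a hypothetical input, and staffing, cooling, and depreciation are deliberately left out.

```python
def daily_savings(tokens_per_day: float, cloud_price_per_m: float,
                  self_price_per_m: float) -> float:
    """Dollars saved per day by serving tokens locally instead of via a cloud API."""
    millions_of_tokens = tokens_per_day / 1_000_000
    return millions_of_tokens * (cloud_price_per_m - self_price_per_m)


def break_even_days(hardware_cost: float, tokens_per_day: float,
                    cloud_price_per_m: float, self_price_per_m: float) -> float:
    """Days until cumulative savings cover the hardware purchase."""
    savings = daily_savings(tokens_per_day, cloud_price_per_m, self_price_per_m)
    if savings <= 0:
        return float("inf")  # self-hosting never pays back at these prices
    return hardware_cost / savings
```

With an assumed $40,000 server at 30 million tokens per day, `break_even_days(40_000, 30_000_000, 15.00, 0.04)` comes to roughly 89 days, while the same hardware measured against the $2.50 price tier takes about 542 days. The result is sensitive to which cloud price you are actually displacing, so run it with your own contract rates.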

Can I run a large language model in a fully air-gapped environment?

Yes. llama.cpp and Ollama both support fully air-gapped operation with no external network dependencies. You pre-load model weights onto encrypted drives, install the inference binary from a local package mirror, and serve requests entirely on localhost. The critical requirement is that no component, including license checks or telemetry, makes outbound network calls. Audit this with a network-level packet capture during setup.
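A packet capture remains the authoritative check. As a complementary static check, you can scan configured endpoint URLs and flag anything that does not point at a loopback or private address. The sketch below is one way to do that. It deliberately treats DNS names other than `localhost` as external, since resolving them would itself require a network lookup.

```python
import ipaddress
from urllib.parse import urlparse


def is_internal_endpoint(url: str) -> bool:
    """True if the URL targets localhost or a loopback/private IP literal."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        address = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: a DNS name we refuse to resolve in this sketch.
        return False
    return address.is_loopback or address.is_private


def external_endpoints(urls: list[str]) -> list[str]:
    """Return the configured URLs that would leave the perimeter."""
    return [url for url in urls if not is_internal_endpoint(url)]
```

Running `external_endpoints` over every URL in your inference and gateway configuration gives a quick list of entries to investigate before the capture.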

Which compliance frameworks require on-premise AI deployment?

ITAR mandates that controlled technical data not traverse infrastructure accessible to foreign nationals or foreign-hosted systems, which effectively requires air-gapped deployment for many defense workloads. HIPAA does not prohibit cloud processing (it is permitted under a Business Associate Agreement with appropriate safeguards), but some healthcare organizations choose on-prem for the highest-sensitivity PHI. GDPR does not mandate on-prem but makes cross-border data transfers legally complex. CMMC Level 3 and FedRAMP High both strongly favor private infrastructure.

How do I update an on-premise LLM model without breaking production?

Use blue-green deployment. Maintain two named model endpoints (v1 pointing to the current model, v2 pointing to the candidate). Load the new model weights from offline media, run regression tests against your internal benchmark suite, then shift traffic at the API gateway. Keep the previous model weights on disk for at least 30 days to allow fast rollback. Automate hash verification of model files before each load to detect corruption.
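The traffic shift and rollback described above can be modeled as a small router that maps a stable alias to one of two named endpoints. This is a sketch of the control logic only. The `ModelRouter` class and the endpoint URLs are hypothetical, and a real gateway would apply the switch through its own configuration.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRouter:
    """Tracks which named model endpoint currently receives traffic."""
    endpoints: dict            # name -> internal URL, e.g. {"v1": ..., "v2": ...}
    live: str = "v1"
    history: list = field(default_factory=list)

    def target(self) -> str:
        """URL the gateway should forward requests to right now."""
        return self.endpoints[self.live]

    def promote(self, name: str, regression_passed: bool) -> None:
        """Shift traffic to a candidate, but only after its regression suite passed."""
        if name not in self.endpoints:
            raise KeyError(f"unknown endpoint: {name}")
        if not regression_passed:
            raise RuntimeError("candidate failed regression tests; not promoting")
        self.history.append(self.live)
        self.live = name

    def rollback(self) -> None:
        """Return traffic to the previously live endpoint."""
        if not self.history:
            raise RuntimeError("no previous endpoint to roll back to")
        self.live = self.history.pop()
```

Because the previous endpoint name is kept in `history`, a rollback is a single call as long as the old weights are still on disk.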

Three Decisions That Define Your On-Prem AI Strategy

Three choices govern the success of any on-premise LLM deployment. First, choose your compliance posture before choosing your model. The difference between “on-prem” and “air-gapped” is not semantic; it determines which regulations you satisfy and which controls your security team must audit.

Second, size hardware against your actual concurrency target, not your peak fantasy. Most enterprise use cases run comfortably on a single 80GB A100 node for the first 12 months. Buy the minimum viable GPU cluster, prove the ROI, then expand.

Third, treat the API gateway as a first-class security control, not a routing afterthought. mTLS, RBAC, rate limiting, and audit logging at the gateway layer are what convert a raw inference engine into an enterprise-grade, compliance-auditable system.

The shift to private AI infrastructure is accelerating. Gartner (2025) predicts that by 2030, fragmented AI regulation will cover 75% of the world’s economies. The organizations that build sovereign AI infrastructure now will not be scrambling to retrofit compliance controls later.

The real question is not whether to move your LLMs on-premise. It is whether your team has the architecture clarity to do it without building a security liability in the process.