The Model Context Protocol (MCP) is an open standard that defines how AI agents built on large language models connect to, discover, and invoke external data sources and tools through a unified JSON-RPC 2.0 interface. Introduced by Anthropic in November 2024 and now governed by the Linux Foundation, MCP replaces bespoke per-system integrations with a single client-server contract, reducing an N×M integration problem to N+M. Any AI model supporting the protocol can reach any MCP-compatible service.

Why Every Integration You Build Today Is Technical Debt

AI agents fail in production not because of weak models, but because they cannot reliably connect to the data and tools they need. MCP solves this by providing a universal protocol layer that decouples agents from the systems they act on, making every integration reusable across all future agents you build.

Before MCP, connecting an AI agent to enterprise systems meant writing a custom connector for every data source. Anthropic described this as an “N×M” data integration problem: N agents multiplied by M tools, all needing pairwise integrations. A team building three agents that each needed access to five systems faced 15 separate integration projects. Add a new agent, and the cost compounds.

The stakes are real. According to Gartner (August 2025), 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from fewer than 5% in 2025. Without a standard integration layer, that growth generates exponential technical debt. MCP converts that N×M matrix into a simple N+M addition.

“The problem is never the model. The problem is everything the model needs to touch.”

What MCP Actually Is And Why It Is Not Just Another API Wrapper

MCP is a client-server protocol, not an API abstraction. It lets agents dynamically discover and invoke tools at runtime without pre-programmed integration logic; the agent asks “what can you do?” and the server answers with a typed, validated tool manifest.

Before MCP, AI tool integrations were vendor-specific and non-portable. A function-calling integration built for OpenAI’s API would not work with Claude or Gemini without a full rewrite. MCP breaks that lock-in. Adopted by OpenAI in March 2025 and Google DeepMind shortly after, MCP has become the industry-neutral standard for AI-to-tool communication.

The protocol is inspired by the Language Server Protocol (LSP), the standard that let any IDE support any programming language. MCP applies the same logic to AI agents: any compliant agent connects to any compliant server. As of December 2025, over 10,000 published MCP servers exist across the official registry, covering developer tools, CRMs, databases, cloud services, and more.

MCP Architecture: The Three-Layer Stack

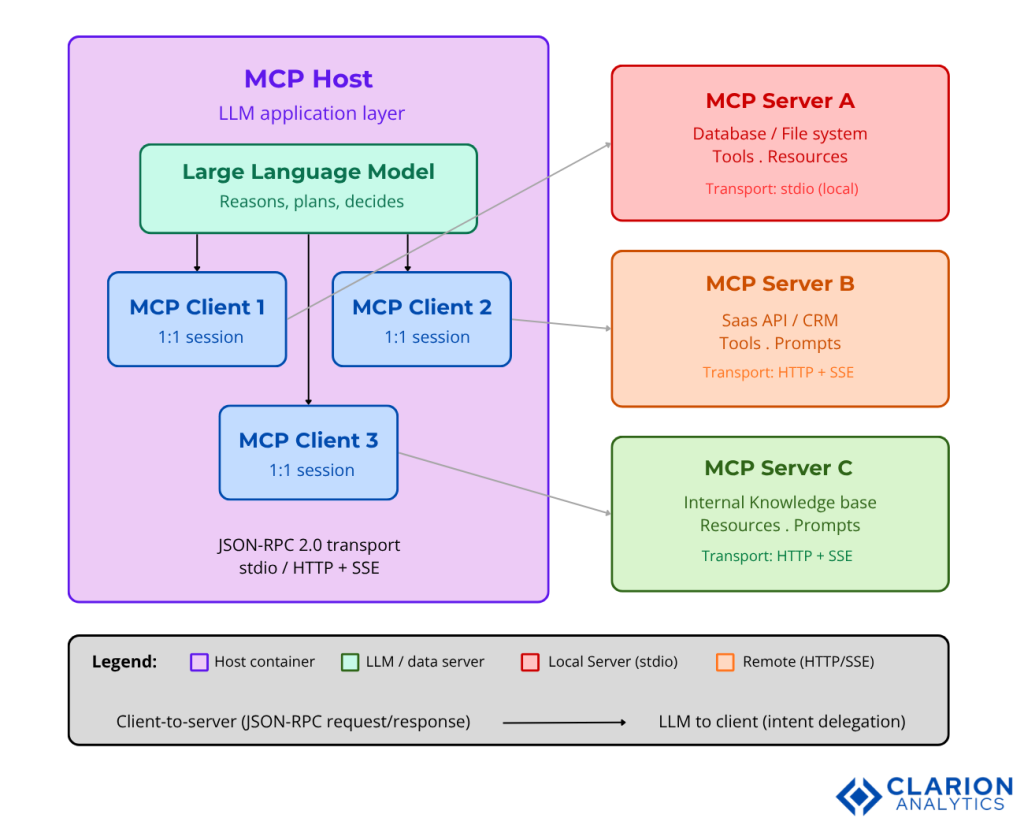

Every MCP deployment has three roles: Host (the LLM application), Client (the protocol intermediary), and Server (the capability provider). JSON-RPC 2.0 messages flow over stdio for local tools or HTTP with Server-Sent Events (SSE) for networked services.

Figure 1: The MCP Host contains the LLM and one or more MCP Clients. Each Client maintains a 1:1 connection to an MCP Server that exposes resources (read-only data), tools (executable functions), and prompts (reusable templates). A single host can connect to dozens of servers simultaneously, enabling multi-domain agent orchestration without rewriting integration code.

Code Snippet 1: Registering a Tool with the Python SDK

Source: modelcontextprotocol/python-sdk — 22,306 stars. The FastMCP server is the primary entrypoint for Python-based MCP server development.

The @mcp.tool() decorator handles JSON schema generation, parameter serialization, and MCP capability advertisement automatically. Developers write standard Python functions; the SDK handles the protocol. Four lines of Python produce a fully compliant, discoverable MCP tool, no manual schema writing, no transport code.

Real-World Use Cases: Where MCP Moves the Needle

MCP is production-ready for customer service automation, developer tooling, enterprise data retrieval, and multi-agent workflow orchestration. Organisations using MCP report faster agent deployment cycles because integrations are reusable across projects; build the tool once, inherit it in every future agent.

Customer service automation

According to Gartner (March 2025), agentic AI will autonomously resolve 80% of common customer service issues without human intervention by 2029, reducing operational costs by 30%. MCP servers exposing CRM records, ticket systems, and knowledge bases give customer agents the real-time context they need to handle complex cases without scripted fallbacks.

Developer tooling and coding agents

IDEs like Cursor, Windsurf, and VS Code now ship MCP support. GitHub’s MCP server allows Copilot Chat to read repositories, create issues, and trigger workflows directly from natural language prompts. The same server works in any MCP-compatible client.

Enterprise data retrieval

Microsoft Azure integrated MCP into the Azure AI Agent Service in May 2025, adding OAuth 2.1 authentication and enterprise-grade access controls. IBM created MCP tools for watsonx.ai including document library servers that connect agents to enterprise knowledge bases. The pattern: one MCP server per enterprise system, one standard for all agents to consume them.

Multi-agent orchestration

Research published in January 2026 on Context-Aware MCP (CA-MCP) demonstrated that adding a Shared Context Store reduced task execution time by 67% versus traditional MCP on the TravelPlanner benchmark (mean 13.5 seconds vs. 42 seconds). Agents stopped repeating context lookups; each server could read shared state and act autonomously without routing every step through the central LLM.

“MCP turns a one-off integration into reusable infrastructure that every future agent can inherit.”

MCP vs. Alternative Integration Approaches

Compared to vendor-specific function calling or custom REST connectors, MCP wins on reusability and ecosystem compatibility. The trade-off is a slightly higher initial setup cost for teams new to the client-server model, though the Python and TypeScript SDKs reduce that to minutes, not days.

| Approach | Key Strength | Best Used When | Security Profile |

|---|---|---|---|

| Model Context Protocol (MCP) | Universal standard — any agent connects to any server; integrations are reusable across AI platforms and teams | Building multi-agent systems or enterprise pipelines that will evolve over time; portability across AI vendors required | Strong when OAuth 2.1 and scoped permissions are applied; requires active tool-permission hygiene |

| Vendor-specific function calling (e.g. OpenAI tools API) | Tight integration with one vendor; well-documented within that ecosystem | Single-vendor deployment where deep platform integration matters more than portability | Managed by platform; limited cross-server attack surface but no portability |

| Custom REST / HTTP connectors | Maximum control over auth, caching, and retry logic; no protocol overhead | One-off integrations with stable, known APIs that will not scale beyond a single agent | Varies; full control but entire burden falls on the development team |

Table 1: Comparison of AI agent integration approaches. MCP’s primary advantage is portability, an MCP server built today works with every MCP-compatible AI platform.

Code Snippet 2: Connecting an MCP Client in TypeScript

Source: modelcontextprotocol/typescript-sdk – 11,958 stars. The official TypeScript SDK for MCP clients and servers, running on Node.js, Bun, and Deno.

The client connects to a running MCP server, negotiates capabilities, then calls any registered tool by name with typed arguments. The entire round-trip connection, session init, tool discovery, and invocation requires fewer than 15 lines of TypeScript. CTOs evaluating MCP for Node.js stacks can verify integration feasibility in an afternoon.

Implementing MCP in Production: What Teams Actually Face

The top implementation challenges are OAuth token management, tool poisoning attacks, and context window bloat from over-exposed tools. Teams that scope tool sets tightly and implement tool-level access controls ship more reliable agents and have a smaller attack surface to defend.

In practice, teams building MCP systems typically find that the first two weeks go smoothly, the SDKs handle the protocol and the reference servers provide working examples. Complexity arrives in week three, when the first production environment requires scoped authentication, tool permission matrices, and monitoring for anomalous tool invocation patterns. Plan for that investment upfront.

The practical implementation checklist that consistently works:

- Start with the official Python or TypeScript SDK; avoid re-implementing the protocol from scratch.

- Scope tool exposure: expose only the tools each agent needs, not your entire server capability list. Every additional tool in context increases token cost and attack surface.

- Implement OAuth 2.1 for any remote server; the Azure MCP integration ships this by default; other deployments must add it explicitly.

- Test with MCP Inspector (the official debugging UI in the typescript-sdk repo) before connecting to production clients.

- Validate server security using MCP-scan, an open-source tool that identifies tool-poisoning and prompt injection vulnerabilities in running servers.

“The S in MCP stands for security – treat every tool description as untrusted input until proven otherwise.”

Security Considerations You Cannot Skip

MCP’s tool-calling mechanism amplifies attack success rates by 23–41% compared to non-MCP baselines when security controls are absent. The three non-negotiable mitigations are explicit user consent flows, scoped tool permissions, and server identity verification.

A 2025 arXiv study by Xinyi Hou et al. conducted the first systematic security analysis of MCP, covering the full server lifecycle across four phases: creation, deployment, operation, and maintenance. The study identified tool poisoning, cross-server tool shadowing, and prompt injection via the sampling mechanism as the three leading attack vectors.

A complementary large-scale empirical study of 1,899 open-source MCP servers found that despite surpassing 8 million weekly SDK downloads, significant security and maintainability gaps persist in the community ecosystem. The lesson: always audit third-party MCP servers before connecting them to production agents.

The official MCP specification requires that hosts obtain explicit user consent before invoking any tool and that tool descriptions be treated as untrusted unless obtained from a verified server. Production-grade deployments should additionally implement rate limiting, access logs for every tool invocation, and anomaly detection for unexpected tool call sequences.

Frequently Asked Questions About MCP

How does Model Context Protocol work for AI agents?

MCP defines a client-server handshake where an AI agent (client) connects to a capability provider (server), requests a list of available tools or resources, then invokes them via JSON-RPC 2.0 messages. The agent never needs to know the underlying API; it discovers tools dynamically at runtime through a typed, schema-validated manifest. Communication flows over stdio for local processes or HTTP with SSE for remote services.

What is the difference between MCP and function calling?

Function calling is vendor-specific: a tool built for OpenAI’s API requires a complete rewrite to work with Claude or Gemini. MCP is vendor-neutral, any model that supports the protocol can discover and invoke any MCP server. The integration is written once and reused across every AI platform that adopts MCP, including OpenAI, Google DeepMind, and Microsoft Azure AI.

How do I build an MCP server for my application?

Install the Python SDK (pip install mcp) or TypeScript SDK (npm install @modelcontextprotocol/server). Define tool functions with the @mcp.tool() decorator or the server.tool() method. Run the server over stdio for local use or HTTP/SSE for remote access. Both SDKs handle all JSON-RPC serialization, capability negotiation, and schema validation automatically.

Is MCP production-ready for enterprise deployments?

Yes. Microsoft Azure integrated MCP into the Azure AI Agent Service in May 2025 with OAuth 2.1 authentication. IBM, Google Cloud, and AWS all support MCP-based agent deployments. Over 10,000 published MCP servers exist in the official registry. In December 2025, Anthropic donated MCP to the Agentic AI Foundation under the Linux Foundation, ensuring long-term neutral governance.

What are the main security risks in MCP deployments?

The three primary risks are tool poisoning, cross-server tool shadowing, and prompt injection via MCP’s sampling mechanism. According to security research published in 2025, MCP amplifies attack success rates by 23–41% when controls are absent. Mitigation requires scoped tool permissions, mandatory user consent before any tool execution, server identity verification, and ongoing auditing of third-party MCP servers.

Three Insights Every Engineering Team Should Take Forward

Three things stand out from everything covered here. First: MCP has won the protocol standards race. OpenAI, Google DeepMind, and Microsoft adopted it within months of release, and the Linux Foundation now governs it as neutral infrastructure. This is not a bet on Anthropic, it is a bet on an open standard with broad industry backing.

Second: the N×M integration problem is solved, but security must be engineered in from day one. The SDKs make the happy path effortless. The 23–41% amplification of attack success rates in poorly configured deployments is equally real. Security is not a phase two concern.

Third: the teams that invest in MCP now will inherit a compounding integration asset. Every MCP server your team builds today is automatically available to every agent you build tomorrow — whether that agent runs on Claude, GPT-5, or a model that does not exist yet.

What would your agent stack look like if every integration you built today was automatically available to every agent you build tomorrow?