Contact centre AI voice refers to conversational AI systems that handle inbound and outbound telephone interactions autonomously, using a pipeline of automatic speech recognition (ASR), a large language model (LLM) for dialogue management, and text-to-speech (TTS) synthesis. Unlike legacy IVR, these systems understand natural language, retrieve context from enterprise knowledge bases, and transfer to a human agent when the interaction exceeds their competency. They are deployed as a SIP endpoint or WebRTC session and operate at sub-second response latency.

Why the Contact Centre Can No Longer Wait

Contact centre AI voice has crossed from experiment to strategic imperative. According to Gartner (December 2024), 85% of customer service leaders will explore or pilot a customer-facing GenAI voicebot in 2025. The harder truth sits in the same data: only 5% have actually deployed one.

That gap matters because your customers are not waiting. A 2025 McKinsey study confirmed that live phone calls remain the most preferred support channel, including among customers aged 18 to 28. Telephone volume is not declining, and neither is the cost of handling it with humans alone.

Gartner (March 2025) predicts agentic AI will autonomously resolve 80% of common service issues without human intervention by 2029, reducing operational costs by 30%. That is not a technology forecast. It is a margin forecast. Labour can represent up to 95% of contact centre costs. Automating even tier-1 queries generates outsized impact on the P&L.

“Eighty-five percent of customer service leaders are exploring voice AI, but fewer than 6% have achieved enterprise-wide scale. The gap between exploration and execution is where competitive advantage is won.”

Where Voice AI Is Winning Right Now: Five Enterprise Use Cases

The highest-ROI applications today are not experimental. They are production systems with measurable outcomes. McKinsey (March 2025) documents a leading energy company that reduced billing call volume by approximately 20% and shaved up to 60 seconds off customer authentication by integrating a voice AI system into its back-end call workflow.

The five use cases enterprises are successfully deploying at scale are: tier-1 query containment for billing and account status; outbound appointment confirmation and reminder calls; post-call summarisation reducing after-call work by up to 50%; intelligent skills-based routing using intent detection; and 24/7 FAQ handling for high-volume, low-complexity queries.

In practice, teams building this typically find the highest immediate ROI in after-call work automation. Observe.AI achieved a 50% reduction in after-call work for one healthcare client. The agent handles the conversation; the AI handles the documentation. That alone can return the cost of deployment within a single quarter.

Comparison: IVR vs. Agent-Assist AI vs. Autonomous Voice AI

| Approach | Key Strength | Best Used When |
|---|---|---|
| Legacy DTMF IVR | Zero AI cost, proven reliability | Call volumes are low and interaction complexity is minimal |
| Agent-Assist AI | Human in control; AI surfaces answers in real time | Complex, regulated, or empathy-sensitive interactions; AI governance concerns are high |
| Autonomous Voice AI | Fully automated tier-1 handling; 40-80% containment rate | High-volume, repeatable queries where IVR deflection has plateaued |
| Hybrid (AI-first + escalation) | Best of both worlds; containment + human safety net | Enterprise deployments at scale; regulated industries; multilingual environments |

The Architecture Behind a Production Voice AI System

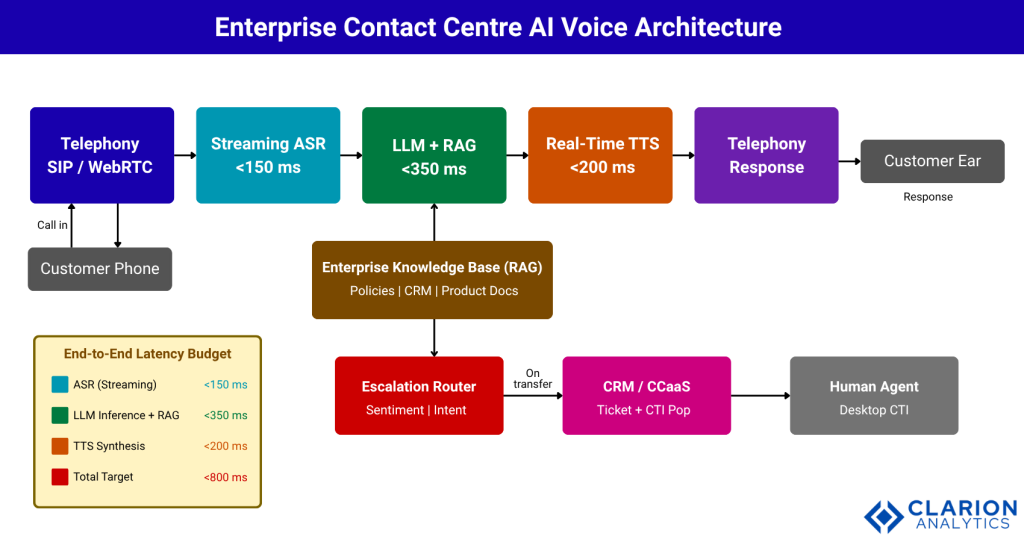

A production contact centre voice AI system is not a single model. It is a four-component pipeline that must operate in under 800 milliseconds end-to-end for the conversation to feel natural.

Research published on arXiv in 2025 by Ethiraj et al. demonstrates this architecture in a live telecom context: a streaming ASR engine transcribes audio in real time, a quantised LLM manages the dialogue and retrieves context via RAG from enterprise documents, and a real-time TTS synthesiser produces voice output. The entire pipeline runs with sub-second latency on GPU-optimised infrastructure.

“The question is no longer whether to replace your IVR with conversational AI. It is which architecture lets you do it without rebuilding your entire telephony stack.”

The four components map to four technology decisions: which ASR engine (Deepgram Nova-3, AssemblyAI, or cloud-native), which LLM (GPT-4o, Claude, or an open model), which TTS voice (ElevenLabs, Cartesia, or Azure Neural), and which telephony gateway (SIP trunk via Twilio or native CCaaS integration). Each is independently swappable, which is why open-source orchestration frameworks have become the preferred integration layer.
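
To make "independently swappable" concrete, here is a minimal sketch of the idea in plain Python. It does not use Pipecat or LiveKit; the interface names (`SpeechToText`, `DialogueModel`, `TextToSpeech`, `VoicePipeline`) and the stub classes are hypothetical and exist only for illustration. It also processes one whole turn at a time, whereas a production pipeline streams audio, and it leaves the telephony gateway out for brevity.

```python
from dataclasses import dataclass
from typing import Protocol


class SpeechToText(Protocol):
    """Hypothetical interface for any ASR engine."""
    def transcribe(self, audio: bytes) -> str: ...


class DialogueModel(Protocol):
    """Hypothetical interface for any LLM-backed dialogue manager."""
    def reply(self, transcript: str) -> str: ...


class TextToSpeech(Protocol):
    """Hypothetical interface for any TTS voice."""
    def synthesize(self, text: str) -> bytes: ...


@dataclass
class VoicePipeline:
    """Runs one caller turn through ASR, then the LLM, then TTS."""
    stt: SpeechToText
    llm: DialogueModel
    tts: TextToSpeech

    def handle_turn(self, audio: bytes) -> bytes:
        transcript = self.stt.transcribe(audio)
        response = self.llm.reply(transcript)
        return self.tts.synthesize(response)


# Stub components standing in for real vendor services.
class EchoStt:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")  # pretend the audio is already text


class CannedLlm:
    def reply(self, transcript: str) -> str:
        return f"You said: {transcript}"


class BytesTts:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")  # pretend the bytes are audio


pipeline = VoicePipeline(stt=EchoStt(), llm=CannedLlm(), tts=BytesTts())
audio_out = pipeline.handle_turn(b"what is my balance")
```

Because `VoicePipeline` depends only on the three interfaces, replacing one vendor means supplying a different object with the same method, which is the property orchestration frameworks provide at production scale.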

Fig 1: The ASR-LLM-TTS cascade architecture is now the dominant pattern for enterprise contact centre AI voice deployments. Latency budgets matter: ASR must complete in under 150ms, the LLM inference and RAG retrieval combined in under 350ms, and TTS in under 200ms. That totals 700ms, leaving roughly 100ms of headroom for telephony and network transport within the 800ms threshold for natural dialogue. The escalation router sits outside the main pipeline and is triggered by intent signals, not call duration. The CRM/CCaaS integration layer writes structured call data back to the agent’s desktop on transfer.
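
The per-stage budgets in the caption can be checked mechanically. The sketch below encodes the three stage limits and the 800ms end-to-end threshold from the text; the function names and the sample measurements are hypothetical, chosen only to show how a team might flag the stage that is over budget.

```python
# Stage budgets and end-to-end limit taken from the figure caption (milliseconds).
STAGE_BUDGETS_MS = {"asr": 150, "llm_and_rag": 350, "tts": 200}
END_TO_END_LIMIT_MS = 800


def headroom_ms(budgets: dict[str, int] = STAGE_BUDGETS_MS,
                limit: int = END_TO_END_LIMIT_MS) -> int:
    """Milliseconds left for network and telephony after all stage budgets."""
    return limit - sum(budgets.values())


def over_budget_stages(measured_ms: dict[str, float],
                       budgets: dict[str, int] = STAGE_BUDGETS_MS) -> list[str]:
    """Names of stages whose measured latency exceeds their budget."""
    return [stage for stage, budget in budgets.items()
            if measured_ms.get(stage, 0.0) > budget]


# Hypothetical measurements from one call turn.
sample_turn = {"asr": 120.0, "llm_and_rag": 410.0, "tts": 180.0}
slow_stages = over_budget_stages(sample_turn)
spare = headroom_ms()
```

With the budgets above, the stages sum to 700ms, so about 100ms remains for everything outside the three models.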

Source: pipecat-ai/pipecat – README quickstart example

The Pipecat README quickstart referenced above shows how the open-source framework wires together a WebRTC transport, a TTS service, and an event handler that greets a caller by name. Swapping CartesiaTTSService for an ElevenLabs service, or DailyTransport for a SIP telephony integration, is largely a matter of changing an import and the matching constructor call rather than restructuring the pipeline.

Choosing Your Platform: Build, Buy, or Hybrid

Enterprises face three structural choices, and each has a distinct risk/reward profile. Choosing incorrectly is the most common reason voice AI programmes stall at pilot.

Build on open source (LiveKit Agents, Pipecat) gives engineering teams full control of data residency, latency tuning, and model selection. The cost is 3 to 6 months of platform engineering before a call is answered in production. This path is appropriate for enterprises with 50+ engineers, specific data sovereignty requirements, or a desire to differentiate on the voice experience itself.

Buy from a CCaaS vendor (Genesys AI, Five9 Intelligent Virtual Agent, NICE CXone) compresses time-to-value to 8 to 12 weeks and provides out-of-box integrations with Salesforce, ServiceNow, and major CRM platforms. The trade-off is limited model choice, vendor lock-in on the LLM layer, and per-minute pricing that erodes margins at volume.

Hybrid is the approach most large enterprises are now landing on. The telephony layer stays with the existing CCaaS vendor. The AI orchestration layer is custom-built on Pipecat or LiveKit, giving teams control of the model, the RAG knowledge base, and the escalation logic.

“The question is not just build vs. buy. It is: which architectural decision are you comfortable making irreversible?”

Source: livekit/agents – quickstart entrypoint

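The LiveKit Agents quickstart entrypoint referenced above wires together Silero VAD, Deepgram STT, GPT-4o-mini, and ElevenLabs TTS into a single-file inbound call handler. It is a workable starting point for a contained pilot, though a production rollout still needs telephony configuration, credential management, and monitoring around it.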

The Implementation Roadmap: From Pilot to Scale

McKinsey (August 2024) found that a European telecom deployed a gen-AI chatbot that eliminated wait times for approximately 20% of contact centre requests within just seven weeks. The organisation identified a single, contained use case, built the minimum viable agent, measured aggressively, then expanded. That sequence is not optional.

“Teams that skip the 60-day contained pilot consistently underestimate integration complexity. The pilot is not a delay. It is the fastest path to scale.”

Horizon 1 (0 to 60 days): Deploy on one high-volume, low-complexity call type such as billing balance queries or store-locator requests. Target 40% containment. Instrument every session for transcript quality, ASR error rate, and NPS delta.

Horizon 2 (3 to 6 months): Expand to 4 to 6 call types. Integrate the knowledge base via RAG. Connect to the CRM for personalisation. Target 55 to 65% containment across covered call types.

Horizon 3 (12 to 18 months): Retire the legacy IVR and replace with AI-first routing. Implement dynamic escalation logic triggered by detected customer sentiment. Target 70%+ containment with a measurably improved CSAT on AI-handled contacts.
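
The containment targets for the three horizons are easy to track once containment is defined precisely. The sketch below assumes the common definition, the share of AI-handled calls that end without a transfer to a human, and uses the lower bound of each horizon's target from the roadmap. The `CallRecord` type and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallRecord:
    call_type: str
    escalated_to_human: bool


# Lower bound of each horizon's containment target, from the roadmap above.
HORIZON_TARGETS = {1: 0.40, 2: 0.55, 3: 0.70}


def containment_rate(calls: list[CallRecord]) -> float:
    """Share of AI-handled calls that never reached a human agent."""
    if not calls:
        raise ValueError("containment rate is undefined for zero calls")
    contained = sum(1 for call in calls if not call.escalated_to_human)
    return contained / len(calls)


def meets_target(calls: list[CallRecord], horizon: int) -> bool:
    """True when observed containment reaches the horizon's target."""
    return containment_rate(calls) >= HORIZON_TARGETS[horizon]


# Hypothetical pilot sample: 9 contained calls and 11 escalations.
pilot_calls = (
    [CallRecord("billing_balance", escalated_to_human=False)] * 9
    + [CallRecord("billing_balance", escalated_to_human=True)] * 11
)
pilot_rate = containment_rate(pilot_calls)
```

In this made-up sample the pilot clears the Horizon 1 target but not the Horizon 2 target, which is the kind of signal that should gate expansion to more call types.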

According to Deloitte’s 2025 AI ROI research, only 6% of AI initiatives see payback in under a year. Contact centre voice AI, done with this phased discipline, is disproportionately represented in that 6%, because the call metrics are real, immediate, and undeniable to the CFO.

Measuring Success: CSAT, Containment, and Cost-Per-Contact

The executive dashboard for a voice AI programme needs exactly three metrics: containment rate, cost-per-contact, and CSAT trajectory.

“Containment rate, cost-per-contact, and CSAT trajectory are the only three metrics your board will care about twelve months after you go live.”

McKinsey’s generative AI productivity research documents that gen-AI-enabled agents achieved a 14% increase in issue resolution per hour and a 9% reduction in handle time in a 5,000-agent contact centre. Those figures translate directly: a 9% AHT reduction on 10 million calls per year at $3.50 cost-per-call is a $3.15 million annual saving before any containment gain is counted.
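
The saving quoted above can be reproduced in a few lines. The calculation assumes that cost per call scales linearly with handle time, which the paragraph implies but does not state; the function name is hypothetical.

```python
def annual_aht_saving(calls_per_year: int,
                      cost_per_call: float,
                      aht_reduction: float) -> float:
    """Annual saving if cost per call falls in proportion to handle time."""
    return calls_per_year * cost_per_call * aht_reduction


# Figures from the paragraph: 10 million calls, $3.50 per call, 9% AHT reduction.
saving = annual_aht_saving(10_000_000, 3.50, 0.09)
print(f"${saving:,.0f} per year")
```

The baseline spend is 10 million calls at $3.50, or $35 million, and 9% of that is the $3.15 million figure. If a large share of cost per call is fixed rather than time-based, the real saving will be lower.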

Academic work by Kaewtawee et al. (2025) evaluating a cloned voice AI agent against human agents confirms the practical boundary: AI approaches human performance on routine interaction criteria but underperforms in persuasion and complex objection handling. This defines the containment ceiling for current deployments and should directly inform your escalation rules.

Reported CSAT data from voice AI implementations shows a 27% improvement in well-executed deployments, driven primarily by zero hold time and consistent first-response accuracy. The caveat: that gain disappears when the escalation experience is poor. The handoff from AI to human agent is the highest-risk moment in any voice AI journey and requires the same engineering attention as the AI itself.

Frequently Asked Questions

What is the difference between contact centre AI voice and traditional IVR?

Traditional IVR uses fixed DTMF menus and pre-recorded prompts. Contact centre AI voice uses natural language understanding to hear what a caller says, interpret their intent, retrieve context from enterprise systems, and respond in natural speech. AI voice can handle unscripted queries and multi-turn conversations that IVR menus cannot navigate.

How long does it take to deploy a voice AI system in an enterprise contact centre?

A contained pilot on one call type can be live in 6 to 8 weeks using a CCaaS-native AI module or an open-source framework like LiveKit Agents. Full IVR replacement with multi-domain coverage typically takes 12 to 18 months across three deployment horizons, with measurable ROI visible after the first 90 days.

What ROI should I expect from AI voice automation in my contact centre?

McKinsey (2024) documents 9% handle-time reduction and 14% resolution-per-hour improvement in live deployments. A leading energy company reduced billing call volume by approximately 20% within months of deployment. Containment rates of 40 to 65% on covered call types are achievable within 6 months, depending on call complexity, with 70% or more a realistic target at 12 to 18 months.

How does voice AI handle accent diversity and noisy call environments?

Modern ASR engines such as Deepgram Nova-3 and AssemblyAI Conformer-1 are trained on diverse multilingual and accent-varied datasets. Research by Wei et al. (2024) on cross-modal contextual ASR shows meaningful accuracy gains in conversational settings. Noise cancellation, typically a pre-processing step before ASR, is now available as a real-time plugin in frameworks like LiveKit.

When should a voice AI agent hand off to a human agent?

Escalation should be triggered by detected sentiment signals (frustration, distress), intent-type mismatch (query type not within the AI’s training domain), explicit customer requests for a human, and defined regulatory triggers such as fraud claims or medical queries. Escalation logic should be coded as deterministic rules, not left to the LLM’s discretion.
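
A minimal sketch of what "deterministic rules" can look like is below. The four triggers come from the answer above; everything else is an assumption made for illustration, including the intent labels, the phrase list, the 0.7 frustration threshold, and the idea that an upstream classifier supplies `intent` and `frustration_score`.

```python
from dataclasses import dataclass
from typing import Optional

# All values below are hypothetical placeholders, not recommended settings.
SUPPORTED_INTENTS = frozenset({"billing_balance", "account_status", "store_locator"})
REGULATED_INTENTS = frozenset({"fraud_claim", "medical_query"})
HUMAN_REQUEST_PHRASES = ("speak to a human", "real person", "talk to an agent")
FRUSTRATION_THRESHOLD = 0.7


@dataclass(frozen=True)
class TurnSignals:
    utterance: str
    intent: str               # label from an upstream intent classifier (assumed)
    frustration_score: float  # 0.0 to 1.0 from a sentiment model (assumed)


def escalation_reason(turn: TurnSignals) -> Optional[str]:
    """Return why this turn must go to a human, or None to stay with the AI.

    Rules are checked in a fixed order so the same input always gives the
    same decision, independent of anything the LLM generates.
    """
    text = turn.utterance.lower()
    if any(phrase in text for phrase in HUMAN_REQUEST_PHRASES):
        return "customer_requested_human"
    if turn.intent in REGULATED_INTENTS:
        return "regulatory_trigger"
    if turn.frustration_score >= FRUSTRATION_THRESHOLD:
        return "negative_sentiment"
    if turn.intent not in SUPPORTED_INTENTS:
        return "intent_out_of_scope"
    return None


reason = escalation_reason(
    TurnSignals("I think someone used my card", "fraud_claim", 0.2)
)
```

Returning a named reason rather than a bare boolean lets the reason be written to the agent desktop on transfer and counted in reporting, so teams can see which rule drives most handoffs.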

The Decision Your Competitors Are Already Making

Three insights define the state of enterprise contact centre AI voice in 2025. First, the technology is ready: academic research and open-source frameworks have closed the gap between prototype and production. Second, the ROI is real but requires phased discipline: McKinsey’s documented case studies show measurable gains in handle time and containment, but only for organisations that ran contained pilots before scaling. Third, the architecture decision is reversible if you build on open-source orchestration, but near-permanent if you lock into a single CCaaS vendor’s AI module.

The one question every CXO should ask before next quarter: if your competitors deploy contact centre voice AI on their highest-volume call types this year, how many months before you lose the cost or experience advantage that lets you close business against them?